Multi-Agent Systems: How AI Agents Collaborate to Solve Complex Problems (2026)

Inside Multi-Agent Systems: How AI Agents Coordinate to Tackle Complex Tasks (2026)

A single AI agent, no matter how capable, faces a ceiling. It processes tasks largely sequentially, holds a bounded context window, and cannot work on several workstreams at once. Multi-agent systems raise that ceiling. By deploying networks of autonomous agents, each specialised and each contributing to a shared objective, these architectures address problems that a single model handles poorly or not at all. Understanding how multi-agent systems work in AI is, in 2026, one of the most practically important questions in applied artificial intelligence.

⚠️ Tech Disclaimer: This guide explores 2026 AI trends for educational purposes. AI capabilities and software performance vary by platform; this is not professional, technical, or financial advice. Always verify with certified experts before relying on it for a critical system.

This article provides a clear, educational analysis of multi-agent systems — covering what they are, why single agents reach their limits, how AI agents collaborating on tasks communicate and coordinate, and what real-world deployments reveal about their capabilities. It also addresses the genuine challenges of coordinating autonomous AI at scale, and examines where the future of collaborative AI agents is heading through 2030. Stanford’s AI Index 2024 documents substantial improvements in AI planning and coordination benchmarks [1], directly enabling the distributed AI agent architecture this article examines.

For the broader strategic context — how this shift is challenging the entire app ecosystem — explore our full pillar guide: AI Personal Agents Are Replacing Your Apps Faster Than You Think.

What Are Multi-Agent Systems?

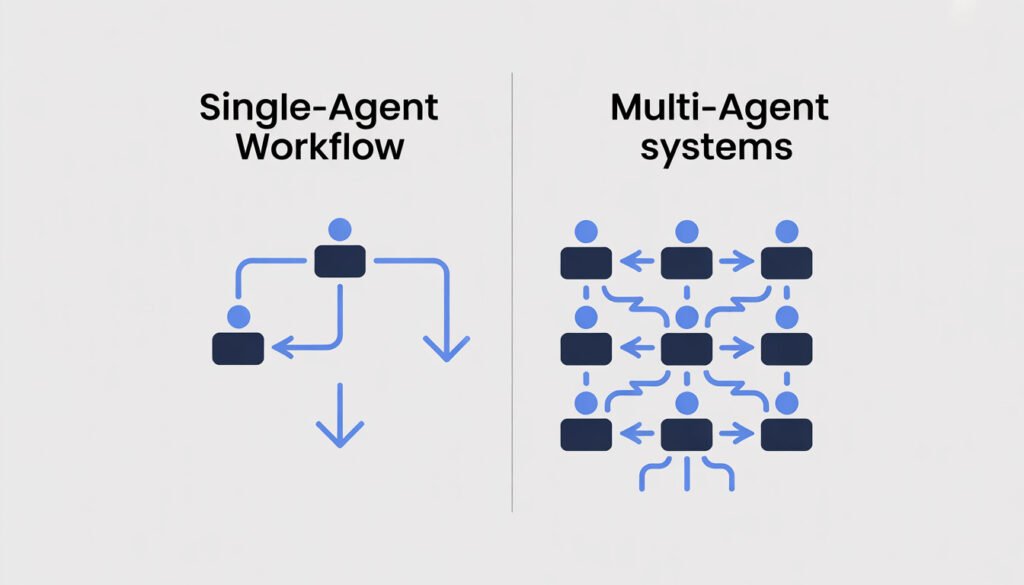

Multi-agent systems (MAS) are architectures in which multiple autonomous AI agents interact, coordinate, and collaborate to achieve shared objectives. Each agent in a multi-agent system possesses its own reasoning capabilities, knowledge base, and action set — but contributes those capabilities toward a collective goal that no single agent could accomplish independently.

The defining characteristic of multi-agent systems is not just the presence of multiple agents — it is the structured interplay between them. Agents in a MAS do not simply run in parallel and ignore each other. They communicate, share knowledge, negotiate task allocation, monitor each other’s outputs, and adapt their strategies based on collective feedback. This capacity for AI agent collaboration is what transforms a collection of individual models into a genuinely intelligent network.

Core Components of a Multi-Agent System

- Agents: Independent software entities capable of perception, reasoning, and action — each with a defined role and capability set.

- Environment: The digital or physical context in which agents operate and through which they interact with external data sources and services.

- Communication protocols: Structured mechanisms enabling agents to exchange task status, knowledge updates, and coordination signals.

- Coordination strategies: Methods for ensuring agents work toward the shared objective without conflicting, duplicating effort, or creating bottlenecks.

- Shared learning mechanisms: Systems through which agents update their models based on collective experience — enabling the network to improve over time.
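The first two components can be made concrete with a small sketch. Everything in it is an assumption made for illustration: the class names `Environment` and `Agent`, the `perceive`/`decide`/`step` methods, and the threshold rule that stands in for real reasoning. It is not taken from any specific framework.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Environment:
    """Shared context that agents observe and act on (hypothetical)."""
    readings: Dict[str, float] = field(default_factory=dict)
    actions: List[str] = field(default_factory=list)


@dataclass
class Agent:
    """An agent with a defined role that perceives, reasons, and acts."""
    name: str
    watches: str      # key in the environment this agent is responsible for
    threshold: float  # illustrative decision rule, not a real policy

    def perceive(self, env: Environment) -> float:
        return env.readings.get(self.watches, 0.0)

    def decide(self, value: float) -> Optional[str]:
        # Reasoning is reduced to a threshold check for illustration.
        if value > self.threshold:
            return f"{self.name}: alert on {self.watches}"
        return None

    def step(self, env: Environment) -> None:
        action = self.decide(self.perceive(env))
        if action is not None:
            env.actions.append(action)


env = Environment(readings={"temperature": 81.0, "inventory": 40.0})
agents = [
    Agent("monitor", watches="temperature", threshold=75.0),
    Agent("stock", watches="inventory", threshold=100.0),
]
for agent in agents:
    agent.step(env)

print(env.actions)
```

Only the `monitor` agent acts here, because only its reading exceeds its threshold. Communication, coordination, and shared learning would sit on top of this loop.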

🧠 Knowledge Assessment: Multi-Agent Systems

Q1: What is the primary capability that makes multi-agent systems more powerful than single-agent workflows?

- A) They require less computing power
- B) Multiple agents collaborate, share knowledge, and distribute tasks simultaneously
- C) They operate without any internet connection
- D) They are limited to text-based processing only

Q2: In a multi-agent system, which architecture assigns tasks through a central controlling agent?

- A) Decentralised peer-to-peer coordination
- B) Blackboard knowledge-sharing system
- C) Centralised orchestration architecture
- D) Market-based task bidding protocol

Q3: Which communication model allows all agents in a multi-agent system to access shared knowledge from a common repository?

- A) Direct peer-to-peer messaging
- B) Blackboard system
- C) GPU rendering pipeline
- D) Static rule database

✅ Correct Answers:

- Q1 → B: Multiple agents collaborate, share knowledge, and distribute tasks — enabling parallel execution at a scale and speed no single agent can match.

- Q2 → C: Centralised orchestration architecture — a master agent assigns roles and coordinates the workflow across all sub-agents.

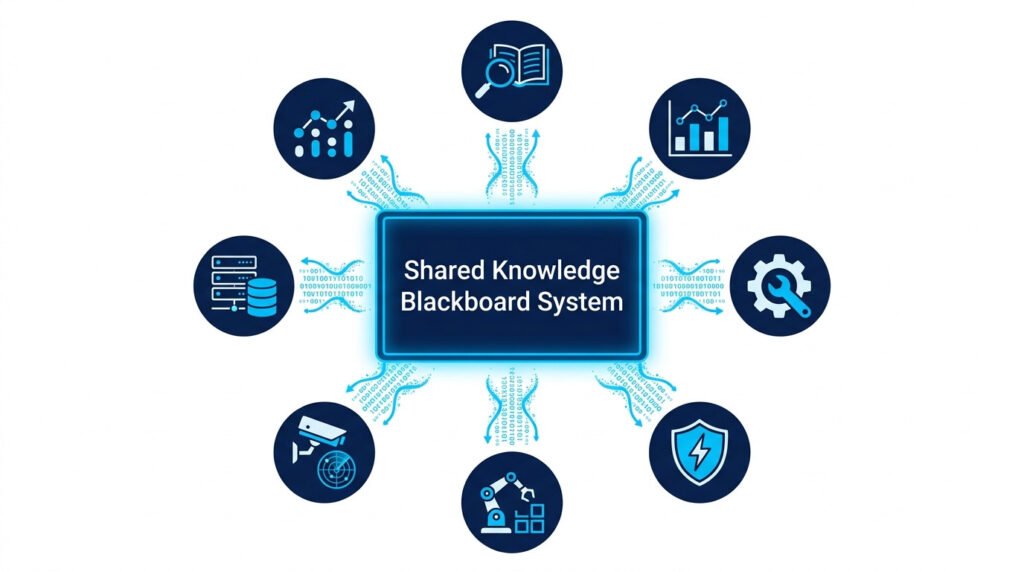

- Q3 → B: Blackboard system — a shared knowledge repository that all agents can read from and write to, enabling collective intelligence without direct messaging overhead.

💡 For more information, explore the complete segments of our AI & Personal Technology Series

Why Single AI Agents Are Not Enough

To understand the value of multi-agent systems, it is necessary to be precise about where single-agent architectures break down. A capable LLM-based agent handles well-scoped, sequential tasks effectively. But the moment a problem requires parallel workstreams, specialised expertise across multiple domains, or real-time adaptation to changing conditions across multiple data sources simultaneously, a single agent hits structural constraints that cannot be resolved by simply making it more capable.

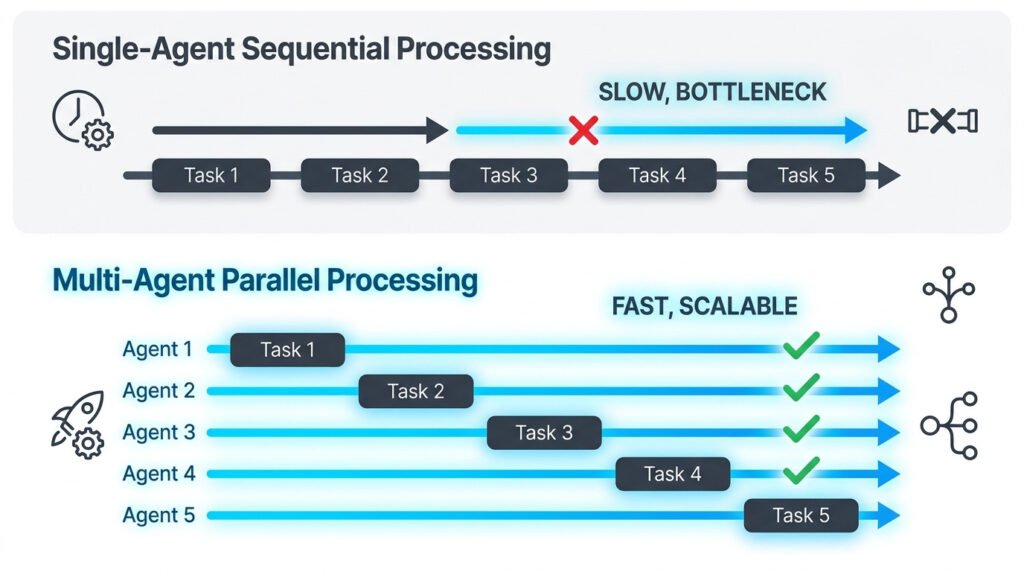

The Sequential Bottleneck

Single agents process one task thread at a time. For a problem that genuinely requires simultaneous action across five independent workstreams — say, monitoring five market segments at once, each requiring different data sources and analytical approaches — a single agent must context-switch between them. Each switch introduces latency, truncates context, and produces outputs that are necessarily less informed than a dedicated agent operating continuously within that workstream.
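The difference between context-switching and dedicated workstreams can be sketched with Python's `asyncio`. This is an illustration under stated assumptions: `monitor_segment` is a hypothetical stand-in for an agent waiting on a data source, the segment names are invented, and the short sleep simulates I/O latency rather than real analysis.

```python
import asyncio
from typing import List


async def monitor_segment(segment: str, delay: float = 0.01) -> str:
    """Stand-in for one dedicated agent watching one market segment."""
    await asyncio.sleep(delay)  # simulates waiting on that segment's data source
    return f"{segment}: ok"


async def run_sequential(segments: List[str]) -> List[str]:
    # One agent switching between workstreams: each wait starts after the previous one ends.
    return [await monitor_segment(s) for s in segments]


async def run_parallel(segments: List[str]) -> List[str]:
    # One agent per workstream: all waits overlap, and gather keeps input order.
    return list(await asyncio.gather(*(monitor_segment(s) for s in segments)))


SEGMENTS = ["energy", "retail", "tech", "health", "transport"]
sequential = asyncio.run(run_sequential(SEGMENTS))
parallel = asyncio.run(run_parallel(SEGMENTS))

print(sequential == parallel)
```

Both runs return the same results. For I/O-bound waits like these, the sequential version takes roughly the sum of the delays, while the parallel version takes roughly the longest single delay.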

The Context Window Ceiling

Even the most capable LLMs have bounded context windows. For extended, complex workflows involving large volumes of data across multiple sources, a single agent cannot hold all relevant information in an active context simultaneously. Multi-agent systems resolve this structurally — each agent maintains its own focused context, contributing its output to a shared knowledge layer that the system as a whole can draw from without any individual agent becoming cognitively overloaded.
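A rough sketch of that structure follows. Each agent keeps a bounded context of its own and publishes only a summary to a shared layer. The class name `FocusedAgent`, the item labels, and the use of a fixed-length `deque` as a stand-in for a context window are all assumptions made for illustration; a real system would summarise with a model rather than a format string.

```python
from collections import deque
from typing import Deque, Dict


class FocusedAgent:
    """Keeps only its own recent items in context (a bounded window)."""

    def __init__(self, name: str, max_items: int) -> None:
        self.name = name
        self.context: Deque[str] = deque(maxlen=max_items)

    def ingest(self, item: str) -> None:
        self.context.append(item)  # the oldest item is dropped once the bound is reached

    def summarise(self) -> str:
        return f"{len(self.context)} recent items, latest: {self.context[-1]}"


# Shared knowledge layer: holds one compact summary per agent, not the raw data.
shared_layer: Dict[str, str] = {}
sources = {
    "imaging": ["scan-1", "scan-2", "scan-3", "scan-4"],
    "labs": ["panel-1", "panel-2"],
}
for name, items in sources.items():
    agent = FocusedAgent(name, max_items=3)
    for item in items:
        agent.ingest(item)
    shared_layer[name] = agent.summarise()

print(shared_layer)
```

No agent ever holds more than three items, yet the shared layer still reflects every source.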

The Specialisation Gap

A generalist agent produces generalist outputs. For domains requiring deep specialisation, such as financial modelling, medical data interpretation, or legal document analysis, a multi-agent system can deploy purpose-built agents trained or prompted for each domain, coordinated by an orchestrator that synthesises their outputs. The result is a level of domain depth that a single generalist model struggles to match. McKinsey’s analysis identifies specialised AI agent collaboration as one of the highest-value configurations for knowledge-intensive enterprise tasks [3].

How AI Agents Communicate With Each Other

The intelligence of a multi-agent system is only as effective as its communication infrastructure. AI agents collaborating on tasks must exchange information accurately, efficiently, and without creating coordination overhead that negates the benefits of parallelism. In 2026, four primary communication models define how autonomous AI teams share state, knowledge, and task signals.

| Communication Model | How It Works | Best Suited For |
| --- | --- | --- |
| Direct Messaging | Agents exchange point-to-point messages with task updates | Fast bilateral coordination between known agents |
| Blackboard System | Shared repository all agents read from and write to | Complex problems requiring collective knowledge |
| Market-Based Bidding | Agents bid for tasks based on capability or availability | Dynamic workload balancing across large agent pools |
| Broadcast Protocol | One agent sends updates to all others simultaneously | Synchronisation and real-time status alignment |

The choice of communication model has direct implications for system performance. Direct messaging is fast and precise, but creates dependency on bilateral relationships. Blackboard systems enable collective intelligence at the cost of access management complexity. Market-based coordination handles dynamic task allocation elegantly but requires agents with well-calibrated utility functions. Most production multi-agent systems employ a hybrid of these models — using direct messaging for time-critical signals, blackboard access for shared knowledge, and market-based bidding for flexible workload distribution.
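Three of these models (direct messaging, blackboard, and broadcast) can be sketched in a few lines of Python. The `Blackboard` and `MessageBus` classes, their method names, and the agent names are hypothetical and exist only for this example. Real deployments would add persistence, access control, and concurrency handling.

```python
from typing import Any, Dict, List, Tuple


class Blackboard:
    """Shared repository that every agent can read from and write to."""

    def __init__(self) -> None:
        self._entries: Dict[str, Tuple[str, Any]] = {}

    def write(self, author: str, key: str, value: Any) -> None:
        self._entries[key] = (author, value)  # the author is kept for traceability

    def read(self, key: str) -> Any:
        _author, value = self._entries[key]
        return value


class MessageBus:
    """Direct (point-to-point) and broadcast delivery into per-agent inboxes."""

    def __init__(self, agents: List[str]) -> None:
        self.inboxes: Dict[str, List[Tuple[str, str]]] = {a: [] for a in agents}

    def send(self, sender: str, recipient: str, body: str) -> None:
        self.inboxes[recipient].append((sender, body))

    def broadcast(self, sender: str, body: str) -> None:
        for agent, inbox in self.inboxes.items():
            if agent != sender:  # every agent except the sender receives the update
                inbox.append((sender, body))


bus = MessageBus(["researcher", "analyst", "executor"])
board = Blackboard()

bus.send("researcher", "analyst", "dataset ready")   # direct messaging
board.write("analyst", "forecast", {"demand": 120})  # blackboard write
bus.broadcast("executor", "plan v2 is live")         # broadcast

print(bus.inboxes["analyst"])
print(board.read("forecast"))
```

The analyst ends up with two messages (one direct, one broadcast), while the forecast is available to any agent that reads the blackboard, without a message being addressed to it.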

Task Distribution Between Agents

Effective task distribution is the operational core of any functional multi-agent system. The question is not simply which agent does what — it is how the system makes that determination dynamically, adapts when agents fail or become overloaded, and ensures the collective output is coherent rather than fragmented. This is what the distributed AI agent architecture must solve.

Role Assignment Strategies

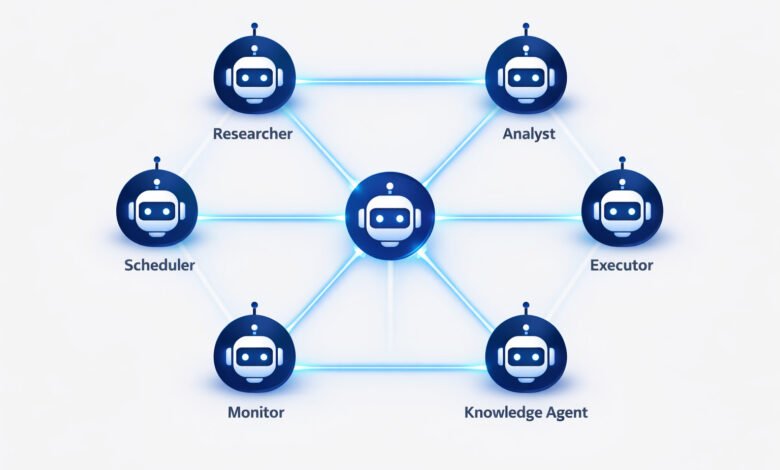

- Static role assignment: Agents are pre-assigned fixed roles — Coordinator, Researcher, Analyst, Executor, Monitor — that remain constant throughout the workflow. This is appropriate for well-understood, repeatable task structures.

- Dynamic role assignment: An orchestrating agent evaluates each sub-agent’s current capacity and capability before assigning each task — redistributing work in real time if an agent becomes overloaded or fails.

- Market-based self-selection: Agents autonomously bid for tasks based on their available capacity and declared capability — enabling decentralised load-balancing without requiring a central coordinator.
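Market-based self-selection can be illustrated with a toy auction. The sketch below assumes a deliberately simple utility function, in which an agent's bid equals its free capacity. The names `Bidder`, `bid`, and `allocate` are invented for the example. Well-calibrated bids in a real system would weigh cost, skill fit, and deadlines.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Bidder:
    name: str
    skills: frozenset
    capacity: int  # number of free task slots

    def bid(self, required_skill: str) -> Optional[float]:
        """Return a bid (higher is better), or None to abstain."""
        if required_skill not in self.skills or self.capacity <= 0:
            return None
        return float(self.capacity)  # assumed utility: more free capacity, higher bid


def allocate(required_skill: str, bidders: List[Bidder]) -> Optional[Bidder]:
    """Award the task to the highest bidder; ties go to the earliest bidder."""
    bids = [(b.bid(required_skill), b) for b in bidders]
    valid = [(score, b) for score, b in bids if score is not None]
    if not valid:
        return None  # nobody can take the task
    _score, winner = max(valid, key=lambda pair: pair[0])
    winner.capacity -= 1
    return winner


bidders = [
    Bidder("a1", frozenset({"research"}), capacity=2),
    Bidder("a2", frozenset({"research", "analysis"}), capacity=1),
    Bidder("a3", frozenset({"analysis"}), capacity=0),
]
tasks = ["research", "research", "analysis", "analysis"]
assignments = []
for task in tasks:
    winner = allocate(task, bidders)
    assignments.append(winner.name if winner else None)

print(assignments)
```

The last task goes unassigned because no agent with the right skill has capacity left. A dynamic orchestrator would have to queue, retry, or escalate in that case.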

The Orchestrator-Worker Pattern

The most widely deployed multi-agent architecture in commercial systems is the orchestrator-worker pattern. A master orchestrator agent — powered by a high-capability LLM — is responsible for goal interpretation, task decomposition, role assignment, and result synthesis. Worker agents, each specialised for a specific function, receive subtasks, execute them, and return outputs to the orchestrator. This pattern is explicitly described by DeepLearning.AI as the primary model for improving LLM performance through agentic architectures [4] — and it forms the foundation of commercial deployments in platforms such as Microsoft Copilot [7].
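A minimal sketch of the orchestrator-worker flow is shown below. It is not how any particular platform implements the pattern. The workers are plain Python functions standing in for specialised agents, `decompose` is hard-coded where a real orchestrator would use an LLM, and all names are hypothetical. Threads are used on the assumption that real workers would mostly wait on model or API calls.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple


# Hypothetical workers: plain functions standing in for specialised agents.
def research(topic: str) -> str:
    return f"notes on {topic}"


def analyse(topic: str) -> str:
    return f"analysis of {topic}"


def draft(topic: str) -> str:
    return f"draft about {topic}"


WORKERS: Dict[str, Callable[[str], str]] = {
    "research": research,
    "analyse": analyse,
    "draft": draft,
}


def decompose(goal: str) -> List[Tuple[str, str]]:
    """Task decomposition and role assignment, hard-coded for illustration."""
    return [(role, goal) for role in ("research", "analyse", "draft")]


def orchestrate(goal: str) -> str:
    subtasks = decompose(goal)
    with ThreadPoolExecutor(max_workers=len(subtasks)) as pool:
        # Dispatch every subtask to its worker, then collect results in submission order.
        futures = [pool.submit(WORKERS[role], payload) for role, payload in subtasks]
        outputs = [f.result() for f in futures]
    return " | ".join(outputs)  # result synthesis, reduced to concatenation


result = orchestrate("Q3 demand")
print(result)
```

The four responsibilities named above map onto the code directly: `decompose` covers goal interpretation and task decomposition, the `WORKERS` lookup is role assignment, and the final join is a placeholder for synthesis.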

Real-World Applications of Multi-Agent Systems

The following examples of multi-agent AI systems are drawn from active commercial deployments and high-confidence development contexts in 2026 — grounding the architectural concepts above in observable, measurable outcomes.

Autonomous Logistics and Supply Chain

In autonomous logistics networks, AI agents collaborating on tasks coordinate delivery route optimisation, real-time vehicle rerouting based on traffic and load conditions, inventory replenishment triggers, and supplier communication — simultaneously. Each agent handles its designated domain; the orchestrator synthesises outputs into a continuously updated logistics plan. The result is a level of real-time adaptive supply chain management no single-agent or traditional automation system can match.

Financial Trading and Risk Analysis

In quantitative finance, multi-agent trading systems deploy specialised agents for market monitoring, technical analysis, fundamental data processing, risk assessment, and trade execution — each operating in parallel and exchanging signals through a shared coordination layer. The collective system responds to market events at a speed and analytical depth that sequential, single-agent approaches cannot achieve. McKinsey identifies this domain as one of the highest-value enterprise applications of autonomous AI teams [3].

Healthcare Diagnosis Support

In clinical decision support, collaborative AI diagnostic systems deploy multiple agents to analyse different data modalities simultaneously: imaging data, lab results, medication history, and clinical notes. Each agent contributes domain-specific analysis to a shared knowledge layer; a synthesis agent consolidates the outputs into a prioritised differential diagnosis for physician review. Stanford’s AI Index documents significant AI performance improvements in medical data processing benchmarks [1], enabling multi-agent healthcare systems to handle complexity previously reserved for specialist consultation.

Smart Manufacturing and Industrial Automation

On smart factory floors, multi-agent manufacturing systems coordinate production scheduling, robotic arm sequencing, quality assurance sampling, maintenance scheduling, and supply triggering across dozens of interconnected systems simultaneously. When one production line develops a bottleneck, the distributed AI agent architecture dynamically redistributes upstream and downstream tasks to maintain throughput. This kind of real-time adaptive coordination is one of the clearest current demonstrations of where multi-agent systems can outperform earlier automation approaches.


Challenges of Coordinating Autonomous AI

A credible assessment of multi-agent systems requires direct engagement with their documented coordination challenges. The benefits of AI agents working together are real — but so are the engineering and governance requirements.

- Communication overhead and latency: As the number of agents in a multi-agent system grows, the volume of inter-agent communication increases. Poorly designed communication protocols can create coordination overhead that negates the efficiency gains of parallelism. Blackboard systems reduce bilateral message volume but introduce complexity in shared-access management.

- Conflict resolution between agents: When two agents generate contradictory recommendations or compete for the same resource, the system requires explicit mechanisms for resolving conflicts. Without them, autonomous AI teams can enter circular negotiation loops or produce inconsistent outputs.

- Security vulnerabilities in distributed systems: A multi-agent architecture with broad access to enterprise systems, financial APIs, and sensitive databases creates a distributed attack surface. A compromised agent could corrupt the shared knowledge layer or trigger harmful actions across the network. The EU AI Act’s high-risk classification framework [6] applies directly to multi-agent deployments in consequential domains.

- Accountability gaps in collective decisions: When a multi-agent system produces an erroneous output — an incorrect trade, a flawed diagnosis recommendation, a misdirected logistics action — attributing responsibility across a network of autonomous agents is legally and operationally complex. Full audit trails of each agent’s reasoning and actions are a governance requirement, not an optional feature.

- Emergent behaviour risks: Networks of collaborative AI agents can produce emergent behaviours, that is, outcomes that arise from agent interactions and were not individually anticipated or designed. In high-stakes domains, emergent behaviour without robust monitoring can lead to system-level failures. Harvard Business Review’s analysis of human-AI collaboration emphasises that the most effective arrangements maintain meaningful human oversight over consequential agent decisions [5].
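Two of the requirements above, explicit conflict resolution and a full audit trail, can be combined in one small sketch. The rule used here (a fixed role priority, with self-reported confidence as a tie-breaker) is an assumption chosen for illustration, as are the names `Recommendation` and `resolve` and the agent roles. Real systems may escalate to a human instead of resolving automatically.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Recommendation:
    agent: str
    action: str
    confidence: float  # self-reported, from 0.0 to 1.0


def resolve(
    recs: List[Recommendation],
    priority: Dict[str, int],
    audit_log: List[dict],
) -> Recommendation:
    """Pick one recommendation in a single deterministic pass and record why."""
    # Higher role priority wins; confidence only breaks ties between equal priorities.
    winner = max(recs, key=lambda r: (priority.get(r.agent, 0), r.confidence))
    for r in recs:
        audit_log.append({
            "agent": r.agent,
            "action": r.action,
            "confidence": r.confidence,
            "accepted": r is winner,
        })
    return winner


audit_log: List[dict] = []
priority = {"risk": 2, "trading": 1}  # assumed policy: risk overrides trading
recs = [
    Recommendation("trading", "buy", 0.9),
    Recommendation("risk", "hold", 0.6),
]
decision = resolve(recs, priority, audit_log)

print(decision.action)
print(audit_log)
```

Because the decision is made in one pass over a fixed ordering, the agents cannot enter a negotiation loop, and the log records both the accepted and the rejected recommendation for later review.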

The Future of AI Agent Collaboration: 2026–2030

The future of collaborative AI agents is not simply more agents doing more tasks — it is a qualitative shift in what autonomous AI teams can accomplish together, driven by three converging capability frontiers.

Inter-agent marketplaces — ecosystems in which specialised AI agents offer their capabilities as services that other agents can discover, select, and integrate dynamically — are beginning to emerge in research prototypes. By 2028, commercial versions of these AI agent collaboration platforms are likely to reach enterprise deployments, enabling organisations to compose complex multi-agent systems from interoperable specialist agents rather than building monolithic custom architectures.

Edge AI integration will extend distributed AI agent architecture beyond cloud-based systems to local devices and physical environments. Agents operating at the edge — on factory floors, in vehicles, in clinical settings — will collaborate in real time with cloud-based counterparts, enabling multi-agent systems to respond to physical-world events at latencies that cloud-only architectures cannot achieve. Gartner identifies this convergence of edge computing and agentic AI as a top strategic technology trend for 2025–2028 [2].

Governance and regulatory frameworks for multi-agent systems are maturing alongside the technology. The EU AI Act’s risk classification system [6] provides the most developed current reference point, but sector-specific frameworks for financial, healthcare, and critical infrastructure deployments are being developed by regulators globally. Organisations that build audit trails, accountability structures, and human oversight mechanisms into their multi-agent system deployments now will be better positioned as regulatory requirements tighten through 2027–2030.

For a comprehensive view of how these developments are reshaping the broader landscape of AI personal agents and the app ecosystem, learn more in our detailed pillar guide: AI Personal Agents Are Replacing Your Apps Faster Than You Think.

Strategic Comparison: Single-Agent vs Multi-Agent Systems

| Dimension | Single-Agent Workflow | Multi-Agent Systems |
| --- | --- | --- |
| Problem complexity | Moderate; sequential tasks | Highly distributed across specialised agents |
| Task execution | One step at a time | Parallel, simultaneous across agents |
| Decision-making | Centralised in one model | Collaborative and distributed |
| Scalability | Limited by single-agent capacity | Highly scalable; add agents as needed |
| Resilience | Single point of failure | Fault-tolerant; agents compensate for failures |
| Learning | Limited to one agent’s experience | Shared collective learning across the network |
| Speed | Sequential bottleneck | Parallel execution dramatically reduces time |
| Flexibility | Fixed scope per agent | Dynamic role reassignment on demand |

Key Takeaways

- Multi-agent systems deploy networks of specialised, collaborative AI agents — enabling parallel execution, distributed decision-making, and shared collective learning that single-agent architectures cannot match.

- Understanding how multi-agent systems work in AI requires grasping three structural layers: communication protocols, coordination strategies, and shared learning mechanisms.

- AI agents collaborating on tasks communicate through direct messaging, blackboard systems, market-based bidding, or broadcast protocols — each with distinct trade-offs.

- The distributed AI agent architecture underlying most production systems follows the orchestrator-worker pattern, with a coordinator agent managing role assignment, task decomposition, and output synthesis.

- Examples of multi-agent AI systems in production include autonomous logistics networks, financial trading platforms, clinical diagnosis support, and smart manufacturing coordination.

- Security governance, conflict resolution, audit trails, and human oversight checkpoints are non-negotiable engineering requirements for any consequential multi-agent system deployment.

- The future of collaborative AI agents points toward inter-agent marketplaces, edge AI integration, and maturing governance frameworks through 2030.

FAQ

Q1: How do multi-agent systems differ from single-agent workflows?

Multi-agent systems deploy multiple specialised agents that collaborate in parallel — sharing knowledge, distributing tasks, and compensating for individual failures. Single-agent workflows process tasks sequentially through one model, creating bottlenecks and context ceiling constraints that multi-agent architectures structurally resolve.

Q2: How do AI agents collaborating on tasks communicate?

Through four primary models: direct point-to-point messaging for bilateral coordination; blackboard systems for shared knowledge access; market-based bidding for dynamic workload distribution; and broadcast protocols for system-wide synchronisation. Most production multi-agent systems employ a hybrid of these models depending on the type of information being exchanged.

Q3: What are the best examples of multi-agent AI systems in 2026?

High-maturity commercial deployments include autonomous logistics coordination, quantitative financial trading platforms, clinical decision support systems, and smart manufacturing control. All share the same distributed AI agent architecture — specialised sub-agents coordinated by an orchestrator, communicating through a structured shared knowledge layer.

Q4: What is the future of collaborative AI agents?

Three frontiers define the trajectory: inter-agent service marketplaces enabling dynamic system composition; edge AI integration extending real-time coordination to physical environments; and maturing regulatory frameworks requiring auditability and human oversight for high-stakes deployments. Gartner projects these capabilities will reach enterprise maturity between 2027 and 2030 [2].

Q5: Are multi-agent systems safe to deploy in regulated industries?

Safety depends on audit trail completeness, human oversight integration, conflict resolution protocols, and security controls. The EU AI Act [6] provides the most developed current regulatory framework. Regulated-industry deployments require explicit accountability structures — identifying which agent made which decision, with what data, and under what authority — before going to production.

AI & Personal Technology Series

This article is part of the AI & Personal Technology Series — a practical collection of guides exploring how autonomous AI systems are reshaping productivity, privacy, and the future of human-technology interaction.

References

- [1] Stanford HAI, Artificial Intelligence Index Report 2024. https://aiindex.stanford.edu/report/

- [2] Gartner, Top Strategic Technology Trends 2025: Agentic AI. https://www.gartner.com/en/documents/5850847

- [3] McKinsey Global Institute, The Economic Potential of Generative AI. https://www.mckinsey.com/featured-insights/mckinsey-live/webinars/the-economic-potential-of-generative-ai-the-next-productivity-frontier

- [4] DeepLearning.AI, How Agents Can Improve LLM Performance. https://www.deeplearning.ai/the-batch/researchers-increasingly-fine-tune-models-on-synthetic-data-but-generated-datasets-may-not-be-sufficiently-diverse-new-work-used-agentic-workflows-to-produce-diverse-synthetic-datasets/

- [5] Harvard Business School Publishing, Collaborative Intelligence: Humans and AI Are Joining Forces. https://hbr.org/2018/07/collaborative-intelligence-humans-and-ai-are-joining-forces

- [6] European Commission, EU AI Act: Regulatory Framework for Artificial Intelligence. https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

- [7] Microsoft Research, Copilot and Agentic Systems. https://www.microsoft.com/en-us/microsoft-copilot/copilot-101/copilot-ai-agents