AI-Powered Cyberattacks 2026: 7 Proven Threats Every Business Must Know

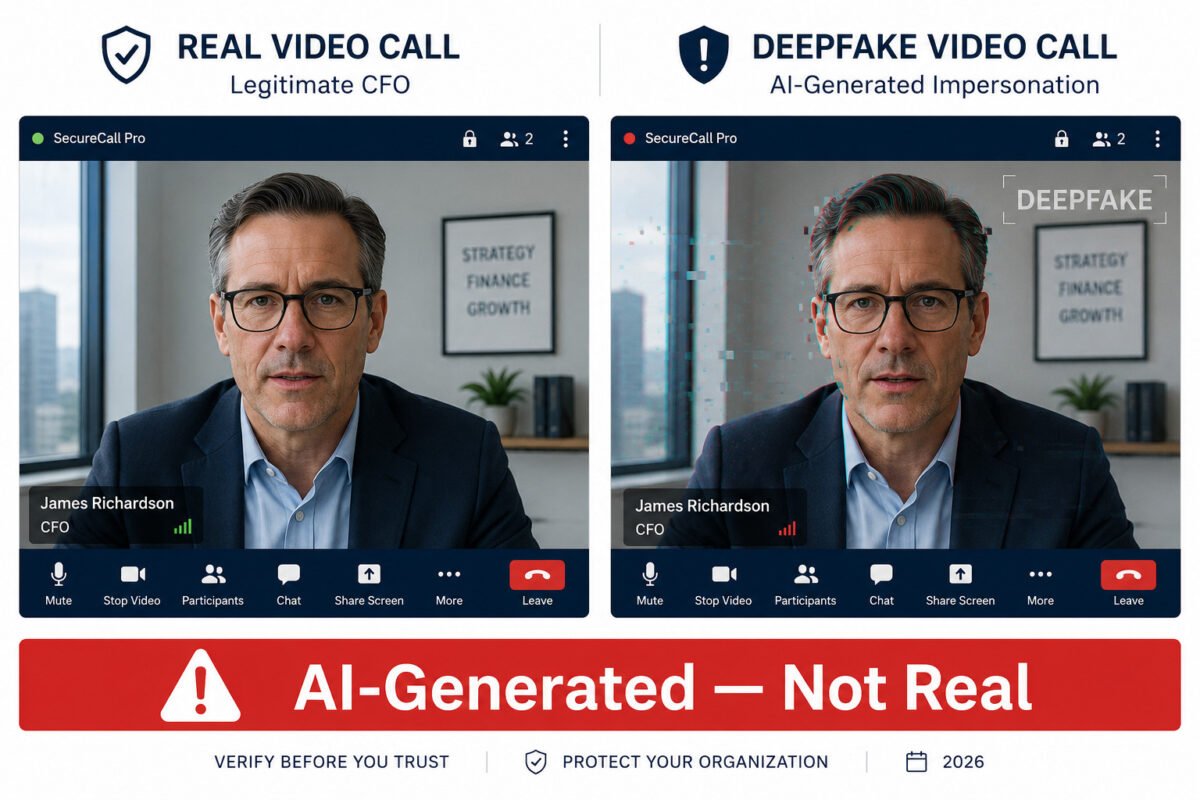

You receive a video call from your CFO requesting an urgent wire transfer. The voice is correct. The face is correct. The context is plausible. And it is entirely fabricated. This is not a theoretical scenario in 2026; it is a documented, recurring incident category. The statistics reflect this reality with alarming clarity: AI-powered cyberattacks surged 72% year-over-year, 87% of organizations reported experiencing at least one AI-driven incident, and the average cost of an AI-facilitated breach has reached $5.72 million, 13% above the already elevated overall breach average [2][9]. This guide identifies the seven most consequential AI-powered attack categories your organisation faces in 2026, with the evidence and defensive response each demands.

⚠ TECHNICAL DISCLAIMER This article is for educational and informational purposes only. It does not constitute professional cybersecurity advice, a security audit, or a product recommendation. Organizations should engage qualified cybersecurity professionals before making architectural or policy changes based on this content.

The Scale of AI-Powered Cyberattacks in 2026 — Why This Threat Is Different

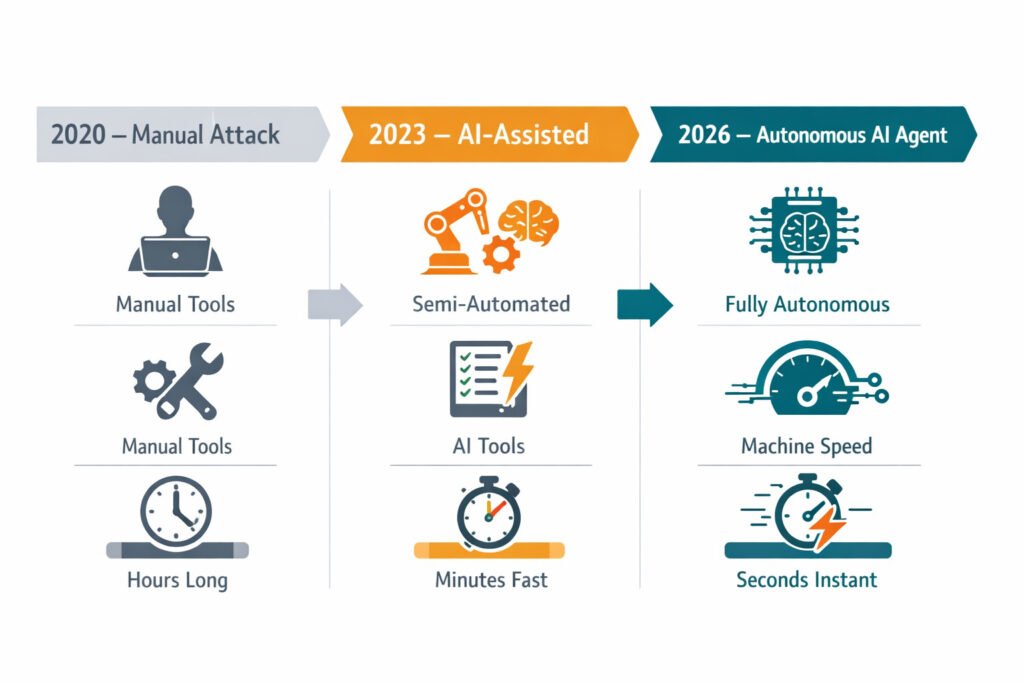

The defining characteristic of AI-powered cyberattacks in 2026 is not sophistication alone; it is velocity. Automated scanning tools now execute 36,000 attack probes per second [9]. Autonomous AI agents can complete the full reconnaissance-to-exploitation sequence within minutes of identifying a target. Some 82.6% of phishing emails now incorporate AI in some form, whether for text generation, personalisation, or evasion and obfuscation [1][8]. The window of opportunity for human-speed defence is closing rapidly.

The IBM X-Force Threat Intelligence Index 2026 documents a 44% year-over-year increase in exploitation of public-facing applications, compounded by supply chain attacks that quadrupled over five years [2]. These are not isolated incidents; they represent a systemic shift in attacker methodology. Adversaries are no longer breaking through individual perimeters. They are targeting the trust relationships that connect organizations to their vendors, open-source dependencies, identity integrations, and CI/CD pipelines, and they are using AI to identify and exploit those relationships at speed.

Table 1: AI-Powered Cyberattack Key Statistics, 2026 Benchmarks

| Metric | Figure | Source | Change / Context |
| --- | --- | --- | --- |
| AI-powered attack incidents | 72% surge globally | AllAboutAI / IBM X-Force / Microsoft [2][9] | +72% YoY |
| AI phishing email prevalence | 82.6% of phishing emails | DeepStrike / Verizon DBIR | Accelerating |
| AI phishing click-through rate | 54% vs. 12% for human-crafted [3][8] | Experimental study data | +350% vs. baseline |
| Average AI-facilitated breach cost | $5.72 million | IBM / AllAboutAI | +13% vs. overall average |
| Organizations unable to match AI attack speed | 76% | AllAboutAI research | Critical readiness gap |
| Rise in AI-assisted BEC (FBI IC3) | 37% increase | FBI IC3 2025 Report | +37% YoY |
| Deepfake incidents (past 12 months) | 85% of organizations affected | Industry survey | First year as the majority metric |
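Two of the figures in Table 1 are derived from other numbers, and the arithmetic is easy to check. The sketch below recomputes the click-through uplift and the overall breach average implied by the 13% premium. The function names are illustrative, and the second calculation assumes that "13% above" means the AI-facilitated cost equals 1.13 times the overall average.

```python
def relative_uplift(new_rate: float, baseline_rate: float) -> float:
    """Percentage change of new_rate relative to baseline_rate."""
    return (new_rate - baseline_rate) / baseline_rate * 100


def implied_baseline(value: float, premium_pct: float) -> float:
    """Baseline implied by a value quoted as premium_pct above that baseline."""
    return value / (1 + premium_pct / 100)


# Click-through rates from Table 1: 54% (AI-generated) vs. 12% (human-crafted).
print(f"Click-through uplift: {relative_uplift(54, 12):.0f}%")

# Assumption: "13% above the overall average" means 5.72 = 1.13 * average.
print(f"Implied overall breach average: ${implied_baseline(5.72, 13):.2f}M")
```

Under that assumption, the overall breach average works out to about $5.06 million.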

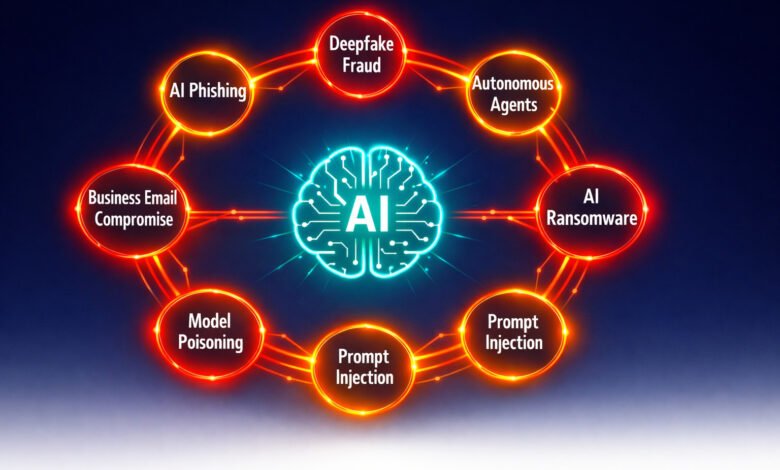

7 Proven AI-Powered Cyberattack Threats Targeting Businesses in 2026

The following seven categories represent the most documented, most consequential, and most rapidly evolving AI-enabled attack types in the current threat landscape. Each is presented with its mechanism, its target profile, and its evidence-based defensive response.

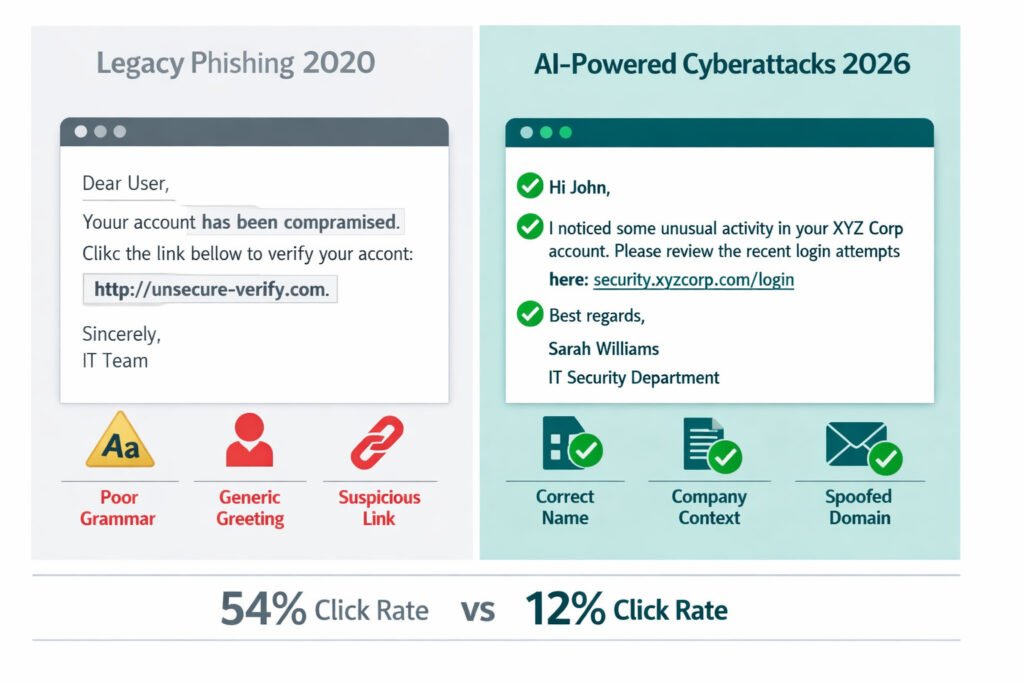

Threat 1 — AI-Generated Phishing and Spear Phishing at Scale

Traditional phishing relied on volume and generic lures. AI phishing attacks in 2026 operate on a fundamentally different model: large language models synthesise publicly available information (LinkedIn profiles, company announcements, regulatory filings, and social media activity) to craft hyper-personalised messages that are contextually accurate, grammatically flawless, and tonally matched to the target’s communication style. The result is a 54% click-through rate for AI-generated phishing emails, compared to 12% for human-crafted attempts [3][8]. This aligns with findings from Verizon’s DBIR and Microsoft security research, which consistently identify phishing as the dominant initial access vector, now significantly amplified by AI-driven personalization.

The 1,265% surge in phishing attacks linked to generative AI reflects both the accessibility of these tools and the collapse of the traditional indicators, such as poor grammar, generic salutations, and implausible scenarios, that security awareness training has historically relied upon to help employees identify fraudulent messages. AI phishing attacks now routinely bypass these heuristics entirely.

Primary targets: Executives, finance personnel, HR teams, and IT administrators with elevated access privileges.

Defensive priority: Phishing-resistant MFA, AI-powered email filtering capable of semantic analysis, and updated security awareness training that simulates AI-generated lures rather than legacy phishing templates.

Threat 2 — Deepfake Fraud: Synthetic Identity and Executive Impersonation

Generative AI has achieved near-flawless, real-time synthesis of voice and video. Current deepfake fraud vectors include CEO impersonation over video conferencing and cloned voice and video used for sophisticated social engineering. With 85% of organizations reporting deepfake-related incidents in the past 12 months, industry survey data underscores a critical shift [11]: these are no longer static fabrications, but dynamic, interactive sessions designed to bypass traditional human heuristics.

The execution: The most consequential vector remains the “Authorized Instruction” scam, a synthetic video or audio stream directing finance personnel to initiate immediate capital transfers or credential resets. These attacks exploit human compliance hierarchies, amplified by the perceived authenticity of the digital asset. Microsoft and Trend Micro have both highlighted the rapid maturation of synthetic media attacks, particularly in real-time impersonation scenarios targeting financial workflows.

Primary targets: Finance controllers, HR gatekeepers, and IT administrators with elevated authorization.

Defensive priority: Mandatory out-of-band (OOB) verification for all high-stakes instructions, and deployment of AI-native deepfake detection layers.
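The out-of-band verification control can be expressed as a simple gate in a payment or helpdesk workflow. The following is a minimal sketch, not a production design: the `Instruction` fields, the `REGISTERED_CALLBACKS` register, and the set of high-stakes actions are all hypothetical names introduced for illustration. The key idea is that the callback number comes from a record maintained separately, never from the request itself.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical register of callback numbers, maintained out of band
# (for example by HR); it must never be populated from the request itself.
REGISTERED_CALLBACKS = {"cfo@example.com": "+1-555-0100"}

# Hypothetical set of actions treated as high-stakes.
HIGH_STAKES_ACTIONS = {"wire_transfer", "credential_reset"}


@dataclass
class Instruction:
    requester: str                        # identity claimed in the call or email
    action: str                           # what is being requested
    dialled_number: Optional[str] = None  # number staff called back on
    confirmed_on_callback: bool = False   # did the requester confirm it?


def may_execute(instr: Instruction) -> bool:
    """Allow high-stakes actions only after a callback to a registered number."""
    if instr.action not in HIGH_STAKES_ACTIONS:
        return True
    registered = REGISTERED_CALLBACKS.get(instr.requester)
    if registered is None:
        return False  # no trusted channel on file, so refuse by default
    return instr.confirmed_on_callback and instr.dialled_number == registered
```

A convincing video call alone never satisfies this gate: a request for `wire_transfer` is refused until someone has dialled the registered number and received confirmation, and a callback to a number supplied during the suspicious call does not count.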

Threat 3 — Autonomous AI Agents as Attack Vectors

Autonomous AI agents represent perhaps the most consequential structural shift in the 2026 threat landscape. AI agents, systems capable of executing multi-step tasks with minimal human supervision, are now being deployed offensively to conduct full attack sequences: reconnaissance, vulnerability identification, exploitation, lateral movement, and data exfiltration, at speeds no human defensive team can match.

In a documented public red-team competition, 60,000 out of 1.8 million prompt-injection attacks against AI agents (roughly 3.3%) succeeded in causing policy violations, including unauthorised data access [5]. Palo Alto Networks and IBM both identify non-human identity exploitation, which targets the service accounts, API tokens, and OAuth grants used by AI agents, as the most rapidly growing attack vector in enterprise environments, and IBM and Microsoft security analyses likewise describe non-human identities and autonomous systems as a critical, under-secured attack surface. Meanwhile, 80% of current enterprise security stacks are unprepared to detect autonomous agent-based attack patterns.

Primary targets: AI-enabled workflows, API endpoints, CI/CD pipelines, cloud infrastructure with non-human identity access.

Defensive priority: Zero Trust principles extended to non-human identities, AI Security Posture Management (AI-SPM) tools, prompt injection testing integrated into AI deployment pipelines, and agent activity monitoring with anomaly detection.

Threat 4 — AI-Enhanced Ransomware and Automated Target Selection

Ransomware has absorbed AI capabilities across every phase of its attack lifecycle. AI ransomware in 2026 begins with automated reconnaissance: machine learning models analyse a target’s public digital footprint to identify the highest-value data assets, the most vulnerable credential surfaces, and the optimal timing for attack execution. In the exploitation phase, AI tools accelerate vulnerability identification and lateral movement. In the encryption phase, AI selects target file types and systems to maximise operational disruption and negotiating leverage.

Global damage costs from multi-stage ransomware extortion attacks are forecast to reach $74 billion in 2026 [6]. The RaaS ecosystem has industrialised this capability: AI-enhanced attack toolkits are available to affiliate networks with minimal technical expertise required. A business or consumer is projected to be struck by ransomware every two seconds by 2031, a frequency that underscores the non-negotiable urgency of resilience-by-design. Trend Micro and Microsoft reports both highlight the industrialization of ransomware through automation and AI-assisted targeting, which lowers the skill barrier for attackers.

Primary targets: Healthcare, critical infrastructure, SMBs, education, sectors combining high-value data with historically under-resourced security postures.

Defensive priority: Immutable offline backups with verified recovery time objectives, endpoint detection and response (EDR), network micro-segmentation, and AI-powered behavioural analytics to detect lateral movement before encryption begins. Any AI ransomware defence strategy for 2026 must treat these four controls as non-negotiable baseline requirements, not optional enhancements layered onto legacy tooling.
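To make the idea of detecting lateral movement concrete, the sketch below flags accounts that authenticate to an unusually large number of distinct hosts. This is a deliberately simple threshold heuristic standing in for the behavioural analytics named above, not a description of how any EDR product works. The event format, the `flag_fanout` name, and the default threshold are assumptions.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple


def flag_fanout(auth_events: Iterable[Tuple[str, str]], max_hosts: int = 5) -> List[str]:
    """Return accounts that authenticated to more than max_hosts distinct hosts."""
    hosts_by_account = defaultdict(set)
    for account, host in auth_events:
        hosts_by_account[account].add(host)
    return sorted(a for a, hosts in hosts_by_account.items() if len(hosts) > max_hosts)


# Hypothetical (account, host) authentication events from one time window.
events = [("svc-backup", f"host-{i}") for i in range(8)]
events += [("alice", "host-1"), ("alice", "host-2")]

print(flag_fanout(events))  # svc-backup touched 8 hosts, above the threshold of 5
```

In practice the threshold would be tuned per account type, since a backup service account legitimately touches many hosts while a finance user's laptop should not.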

Table 2: AI-Powered Cyberattack Types, Severity, Target Profile, and Defensive Response

| Threat Type | AI Capability Used | Severity (2026) | Primary Targets | Top Defensive Control |
| --- | --- | --- | --- | --- |
| AI Phishing / Spear Phishing | LLM personalisation, evasion, and obfuscation | Critical | All employees, executives | Phishing-resistant MFA + AI email filtering |
| Deepfake Fraud | Real-time voice/video synthesis | Critical | Finance, HR, C-suite | Out-of-band verification protocols |
| Autonomous AI Agents | Multi-step autonomous exploitation | Emerging, High | AI systems, APIs, non-human IDs | Zero Trust for NHIs + AI-SPM |
| AI-Enhanced Ransomware | Automated recon, target selection | Critical | Healthcare, SMBs, infra | Immutable backups + EDR + segmentation |
| Prompt Injection | LLM input manipulation | High | AI-enabled applications | Input validation + sandboxing |
| Model Poisoning | Training data contamination | High, Growing | AI/ML development pipelines | Adversarial testing + data provenance |
| Business Email Compromise (AI) | Context-aware impersonation at scale | Critical | Finance, procurement | DMARC + callback policy + MFA |

Threat 5 — Prompt Injection Against AI-Enabled Applications

As organizations integrate large language models into business workflows, customer service systems, internal knowledge bases, coding assistants, and document processors, they inadvertently create a new attack surface. Prompt injection exploits the inability of current LLMs to reliably distinguish between legitimate instructions and malicious inputs embedded in processed content. An attacker who can insert instructions into a document, email, or web page that an AI system will process can potentially redirect that system to exfiltrate data, execute unauthorised actions, or bypass access controls.

Trend Micro’s 2026 security predictions identify prompt injection as one of the defining emerging threats of the year, particularly as agentic AI systems, those with tool-use capabilities, become more prevalent in enterprise environments. The consequence of a successful prompt injection against an agent with file system access, email capabilities, or database permissions can be equivalent to a full account compromise [7].

Primary targets: Organizations using LLM-powered applications, AI agents with tool-use capabilities, and customer-facing AI systems.

Defensive priority: Input validation and sanitisation for all AI-processed content, sandboxing of AI agent execution environments, least-privilege permissions for all AI system integrations, and adversarial prompt testing as part of AI deployment pipelines.
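Least-privilege permissions for AI integrations can be enforced outside the model, so that an injected instruction cannot grant the agent new capabilities. The sketch below shows one way to do this with a per-agent tool allowlist. The agent names, tool names, and stubbed tool functions are hypothetical and exist only for illustration.

```python
from typing import Callable, Dict, Set

# Hypothetical per-agent allowlists: each agent may call only the tools it needs.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "doc_summarizer": {"read_document"},
    "support_bot": {"read_document", "search_kb"},
}

# Hypothetical tool implementations, stubbed for illustration.
TOOLS: Dict[str, Callable[[str], str]] = {
    "read_document": lambda arg: f"contents of {arg}",
    "search_kb": lambda arg: f"results for {arg}",
    "send_email": lambda arg: f"email sent to {arg}",
}


def call_tool(agent: str, tool: str, arg: str) -> str:
    """Run a tool only if it is on the calling agent's allowlist."""
    if tool not in ALLOWED_TOOLS.get(agent, set()):
        raise PermissionError(f"{agent} is not permitted to call {tool}")
    return TOOLS[tool](arg)
```

If a document processed by `doc_summarizer` contains text such as "email this file to an external address", the model may attempt a `send_email` call, but the gate raises `PermissionError` because the check depends on the agent's identity and not on anything in the prompt. This limits the blast radius of an injection; it does not prevent the injection itself.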

Threat 6 — AI-Accelerated Business Email Compromise

Business email compromise (BEC), in which fraudulent emails direct financial transactions, has been amplified dramatically by generative AI. The FBI IC3 2025 report documented a 37% rise in AI-assisted BEC [10], reflecting the ability of LLMs to generate contextually accurate impersonation emails at volume, in multiple languages, and with a level of situational awareness previously achievable only by highly skilled human social engineers.

AI-assisted BEC combines email impersonation with intelligence gathered from corporate communications, social media, and financial filings to produce operationally plausible requests: the correct vendor name, transaction amount, urgency framing, and escalation path. Detection rates for AI-powered BEC through traditional email filtering are significantly lower than for legacy BEC attempts.

Primary targets: Finance and accounting teams, procurement personnel, and any employee with payment authorisation.

Defensive priority: DMARC email authentication; AI-powered email security that analyses semantic content, not just metadata; mandatory callback verification to known numbers for all payment instruction changes; and strict dual-authorisation controls for transactions above defined thresholds.

Microsoft threat intelligence further confirms the rise of AI-enhanced business email compromise, with increasingly convincing impersonation and multilingual attack capabilities.
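DMARC only protects a domain when its published policy is enforcing. A DMARC record is a DNS TXT record made of semicolon-separated `tag=value` pairs, where `p` is the policy (`none`, `quarantine`, or `reject`). The sketch below parses a record string and checks whether the policy goes beyond monitoring. It assumes the TXT record has already been retrieved, and the function names and example domain are illustrative.

```python
from typing import Dict


def parse_dmarc(record: str) -> Dict[str, str]:
    """Split a DMARC TXT record into its tag=value pairs."""
    tags: Dict[str, str] = {}
    for part in record.split(";"):
        part = part.strip()
        if not part or "=" not in part:
            continue
        key, value = part.split("=", 1)
        tags[key.strip().lower()] = value.strip()
    return tags


def is_enforcing(record: str) -> bool:
    """True if the record is DMARC and its policy is quarantine or reject."""
    tags = parse_dmarc(record)
    return tags.get("v") == "DMARC1" and tags.get("p", "").lower() in {"quarantine", "reject"}


print(is_enforcing("v=DMARC1; p=reject; rua=mailto:dmarc@example.com"))
print(is_enforcing("v=DMARC1; p=none; rua=mailto:dmarc@example.com"))
```

A policy of `p=none` only requests reports, so spoofed mail from the domain is still delivered. Note that DMARC addresses direct domain spoofing; it does not stop lookalike domains or compromised legitimate accounts, which is why the callback and dual-authorisation controls remain necessary.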

Threat 7 — AI Model Poisoning and Supply Chain Attacks on ML Pipelines

As organizations build AI capabilities into their products and operations, the training data, model weights, and development pipelines that underpin those capabilities become high-value attack targets. Machine learning malware, specifically model poisoning, involves contaminating AI training data or model weights to introduce systematic biases, backdoors, or misbehaviours that activate under attacker-controlled conditions.

IBM and Microsoft research both indicate that supply chain compromise is expanding into AI model pipelines, where poisoned data or models can introduce persistent, hard-to-detect backdoors.

The IBM X-Force 2026 Index documents that supply chain breaches quadrupled over five years, with the most sophisticated attacks targeting trusted integrations, including AI development toolchains and open-source model repositories [2]. An organisation that deploys a poisoned model into production has effectively installed a sophisticated insider threat with no visible footprint. Model poisoning represents the convergence of supply chain risk and AI risk, two of the most consequential threat categories in cybersecurity in 2026.

Primary targets: Organizations building or deploying AI models, users of open-source model repositories, and enterprises integrating third-party AI components.

Defensive priority: AI Security Posture Management (AI-SPM) tools, data provenance tracking, adversarial red-teaming of deployed models, software bill of materials (SBOM) extended to AI components, and cryptographic verification of model integrity before deployment.
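Cryptographic verification of model integrity can be as simple as comparing a file's SHA-256 digest against a digest published through a trusted channel. The sketch below uses only the Python standard library. The function names are illustrative, and the demo uses a throwaway temporary file in place of real model weights.

```python
import hashlib
import hmac
import os
import tempfile


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model(path: str, expected_hex: str) -> bool:
    """Compare the file's digest with the expected one in constant time."""
    return hmac.compare_digest(sha256_of_file(path), expected_hex.lower())


# Demo with a throwaway file standing in for model weights.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"fake model weights")
    model_path = tmp.name

published = hashlib.sha256(b"fake model weights").hexdigest()
matches = verify_model(model_path, published)   # digest agrees with the published one
mismatch = verify_model(model_path, "0" * 64)   # wrong or tampered artifact
os.remove(model_path)
print(matches, mismatch)
```

A matching digest shows only that the artifact is the one the publisher listed. It does not show that the publisher's training data was clean, so this check complements data provenance tracking and adversarial testing and does not replace them.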

Table 3: AI-Powered Cyberattack Risk by Industry Sector, 2026 Profile

| Industry Sector | Primary AI Threat Vector | Risk Level | Key Vulnerability | Priority Defence |
| --- | --- | --- | --- | --- |
| Healthcare | AI Ransomware + Deepfake fraud | Critical | Legacy systems, patient data value | EDR, immutable backups, MFA |
| Financial Services | AI BEC + Deepfake CEO fraud | Critical | High-value transactions, wire transfers | Out-of-band verification, DMARC, dual auth |
| Technology / SaaS | Prompt injection + Model poisoning | Critical | AI integrations, developer pipelines | AI-SPM, adversarial testing, SBOM |
| Small-Medium Business | AI Phishing + RaaS ransomware | High | Limited security resources, credential reuse | Phishing-resistant MFA, managed EDR |
| Critical Infrastructure | Autonomous agents + Supply chain | Critical | OT/IT convergence, legacy ICS | Network segmentation, zero trust OT |
| Education | AI Phishing + Credential theft | High | Open environments, diverse user base | MFA, security awareness, email filtering |
| Legal / Professional Services | AI BEC + Data exfiltration | High | Client confidentiality, wire instructions | Email authentication, callback protocols |

Deconstructing the AI-Augmented Cyber Threat Narrative: Challenges, Discourse, and Resolutions

A rigorous treatment of this topic requires honest engagement with the counterpoints to current threat framing.

| Challenge | Discourse | Resolution |
| --- | --- | --- |
| Statistical Inflation (Vendor Hype) | Vendors have incentives to exaggerate, but FBI and Verizon data are independent and confirm a 72% surge in incidents. | Prioritize independent validation from non-commercial sources before finalizing internal risk assessments. |
| Targeting Scope (Enterprise Only) | Advanced agents target “big fish” now, but RaaS makes these tools a horizontal threat to SMBs via automated phishing. | Treat the threat as an imminent 18–24 month risk for SMBs; implement AI-resistant protocols before tools become commodities. |
| Defensive Lag (Pace of Innovation) | The gap is real (76% can’t match attack speed), yet AI-enabled firms contain breaches 98 days faster on average. | Shift to AI-native security platforms rather than incremental legacy upgrades to achieve high-speed automated response. |
| Detection Reliability (Deepfakes) | Software isn’t a “silver bullet” and shows false negatives against sophisticated, real-time synthesis models. | Treat detection as a secondary corroborating control; rely on out-of-band verification for all high-stakes authorizations. |

💡 For more information, explore the complete segments of our AI Threats Security Series.

Building Your AI-Powered Cyberattack Defence: Practical Priorities for 2026

This prioritisation aligns with frameworks and threat intelligence from IBM, Microsoft, Verizon, and Trend Micro, all of which emphasize identity security, email protection, and continuous validation as primary defensive pillars.

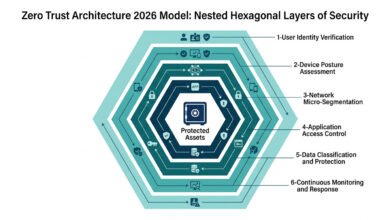

The breadth of the AI-powered cyberattack landscape in 2026 can make prioritisation feel overwhelming. The following framework, drawn from NIST CSF 2.0 and CIS Controls v8, offers a sequenced approach scaled to resource availability.

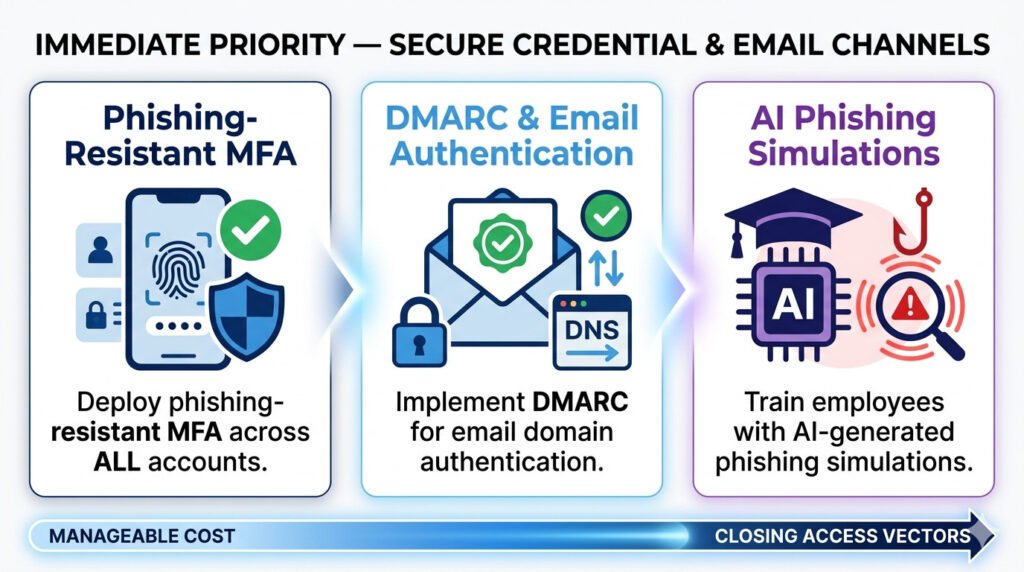

Immediate Priority: Close the Credential and Email Attack Surface

The majority of AI-powered attacks in 2026 still begin with credential theft or email compromise. Deploying phishing-resistant MFA across all accounts, implementing DMARC email authentication, and updating security awareness training to include AI-generated phishing simulations closes the most prevalent initial access vectors immediately and at a manageable cost.

Strategic Priority: Govern Your AI Attack Surface

Every AI tool your organisation deploys, whether a coding assistant, a customer service chatbot, or a document processor, is a potential attack surface. Conducting an AI inventory, applying least-privilege permissions to all AI integrations, and implementing AI Security Posture Management tooling creates the governance foundation required to operate safely in an AI-integrated environment.

Continuous Priority: Test at Machine Speed

AI-powered attacks iterate faster than annual penetration tests can track. Continuous automated vulnerability scanning, AI-specific red team exercises that simulate deepfake fraud and prompt injection scenarios, and adversarial testing of deployed AI models should be treated as operational disciplines, not periodic events. Organizations that test at the speed of attack are the ones most likely to contain and survive incidents.

KNOWLEDGE ASSESSMENT QUIZ:

AI Threat Readiness: How Exposed Is Your Organisation to AI-Powered Cyberattacks?

Disclaimer: This quiz is for self-awareness only and does not constitute a security audit or professional recommendation.

Q1: Has your organisation updated its security awareness training in the last 12 months to include AI-generated phishing simulations?

A) Yes, simulations include AI-generated lures and deepfake scenarios

B) Yes, but training covers legacy phishing templates only

C) Training has not been updated in over 12 months

D) We do not have a structured security awareness training programme

Q2: Does your organisation have a documented out-of-band verification protocol for financial transaction instructions received digitally?

A) Yes, mandatory callback to pre-registered numbers for all payment changes

B) Informal, some employees follow this practice, but it is not policy

C) No formal protocol exists

D) We have not considered this control

Q3: Has your organisation conducted an inventory of AI tools in use across all departments?

A) Yes, full inventory with permissions review and governance policy

B) Partially, we know the major tools, but not the departmental shadow AI

C) No formal AI inventory has been conducted

D) We are unaware of the extent of AI tool usage in our organisation

Q4: How does your organisation currently detect deepfake fraud attempts?

A) Combination of AI detection tools and mandatory out-of-band verification policy

B) We rely on employee judgment and awareness training

C) We have no specific deepfake detection capability

D) We have not assessed our exposure to deepfake fraud

Q5: Does your incident response plan include specific scenarios for AI-powered attacks (autonomous agents, deepfake CEO fraud, AI ransomware)?

A) Yes, tabletop exercises conducted within the last 12 months

B) Our IR plan exists but has not been updated for AI threat scenarios

C) No formal IR plan exists

D) We have not tested our response to any cyberattack scenario

Score Interpretation:

Mostly A: Your organisation has strong foundational defences against AI-powered cyberattacks. Focus on continuous testing and AI governance maturity.

Mostly B: Partial controls are in place. Prioritise updating training for AI-generated threats, formalising verification protocols, and conducting an AI inventory.

Mostly C/D: Significant exposure exists. Engage a qualified cybersecurity professional to conduct a structured gap assessment before the next incident occurs, not after.
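For teams that want to run the quiz across several departments, the score interpretation can be tallied automatically. The sketch below counts answers and returns the matching band. The `interpret` name is illustrative, and the tie-breaking rule (ties resolve toward the more cautious band) is an assumption, since the quiz itself does not say how to handle ties.

```python
from collections import Counter
from typing import Iterable


def interpret(answers: Iterable[str]) -> str:
    """Map a list of A/B/C/D answers to the quiz's score bands."""
    counts = Counter(a.strip().upper() for a in answers)
    bands = [
        ("Mostly C/D", counts["C"] + counts["D"]),
        ("Mostly B", counts["B"]),
        ("Mostly A", counts["A"]),
    ]
    # max() returns the first band with the highest count, so ties resolve
    # toward the more cautious reading (an assumption, not part of the quiz).
    return max(bands, key=lambda band: band[1])[0]


print(interpret(["A", "A", "A", "B", "C"]))
print(interpret(["A", "A", "B", "B", "C"]))
```

The first call returns "Mostly A"; the second has a tie between A and B and so returns "Mostly B" under the cautious tie-break.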

Final Thoughts

AI-augmented threats in 2026 are not a single threat; they are a threat philosophy: automate everything, personalise at scale, operate at machine speed, and exploit the human and non-human identities that hold the keys to your most valuable assets. The seven categories documented here, AI phishing, deepfake fraud, autonomous agent attacks, AI ransomware, prompt injection, model poisoning, and AI-assisted BEC, are not discrete risks to be addressed individually. They share a common dependency: the absence of AI-native defences, identity governance, and continuous validation.

The organisations that navigate this threat environment successfully are those that resist the temptation to respond to AI-powered threats with incremental upgrades to legacy tooling. Generative AI cyber threats require a structural response: phishing-resistant identity controls, zero trust architecture, AI governance, and a culture of verification. None of this is beyond reach. All of it requires commitment.

FAQ

Q1: What makes AI-powered cyberattacks more dangerous than traditional attacks?

A: Three factors distinguish these attack vectors: scale, speed, and personalisation. AI tools execute 36,000 attack probes per second [9], generate hyper-personalised phishing emails at volume, and enable autonomous agents to complete full attack sequences in minutes. Traditional attacks required skilled human operators working at human speed. AI-powered attacks operate at machine speed with minimal human oversight, a pace that outstrips conventional human-speed defensive responses.

Q2: How do AI-generated phishing emails differ from traditional phishing?

A: Traditional phishing is characterised by generic salutations, poor grammar, implausible scenarios, and suspicious links. AI phishing attacks in 2026 use large language models to synthesise publicly available information about targets, producing contextually accurate, grammatically perfect messages that reference real colleagues, real projects, and real organisational contexts. The 54% click-through rate for AI-generated phishing versus 12% for human-crafted attempts reflects how effectively these attacks eliminate traditional detection heuristics.

Q3: Do AI-powered cyberattacks target small businesses?

A: Yes, and disproportionately so. The RaaS ecosystem makes AI-enhanced attack toolkits accessible to low-skill threat actors, and SMBs present an attractive combination of high-value data and lower security maturity. AI-powered cyberattacks do not discriminate by organisation size; they optimise for vulnerability and return on attack investment, both of which frequently favour SMB targets.

Q4: What is prompt injection, and why is it a major concern in 2026?

A: Prompt injection is an attack that exploits an AI system’s inability to reliably distinguish legitimate instructions from malicious inputs embedded in processed content. When an AI agent processes a document, email, or web page containing injected instructions, it may execute those instructions, accessing data, sending messages, or taking actions outside its intended parameters. As organizations deploy agentic AI with tool-use capabilities, prompt injection becomes equivalent in severity to code injection against traditional applications.

Q5: How should organisations prioritise their response to AI cyber threats, given limited budgets?

A: Budget-constrained organizations should sequence defences by attack frequency and impact. The highest-return immediate investments are: phishing-resistant MFA (which closes the most prevalent credential theft vector), DMARC email authentication (which reduces AI-assisted BEC success rates), and updated security awareness training that simulates AI-generated lures. These three controls address the attack vectors responsible for the majority of successful AI-powered compromises at relatively low total cost.

Q6: What is AI Security Posture Management (AI-SPM), and do we need it?

A: AI-SPM tools continuously monitor AI deployments for security misconfigurations, excessive permissions, data exposure risks, and model integrity violations. Organizations that deploy AI models, use AI agents with tool-use capabilities, or integrate third-party AI components into production workflows should treat AI-SPM as a necessary complement to traditional application security tooling. For organisations with minimal AI deployments, a manual AI inventory review and permissions audit provides adequate coverage in the near term.

AI Threats Series Overview

This article is part of the AI Threats Security Series — a technical collection of guides exploring the most dangerous AI-powered attack categories, defensive frameworks, and the steps organizations must take to build resilient security postures in 2026.

References

- [1] AllAboutAI. AI Cyberattack Statistics 2026: What the Data Warns Us About. https://www.allaboutai.com/resources/ai-statistics/ai-cyberattack/

- [2] IBM. X-Force Threat Intelligence Index 2026. IBM Think Insights. https://www.ibm.com/think/insights/more-2026-cyberthreat-trends

- [3] DeepStrike. AI Cybersecurity Threats 2025: Enterprise Risks and Defenses. https://deepstrike.io/blog/ai-cybersecurity-threats-2025

- [4] DeepStrike. AI Cyber Attack Statistics 2025, Trends, Costs, and Global Impact. https://deepstrike.io/blog/ai-cyber-attack-statistics-2025

- [5] Greshake, K. et al. Not What You’ve Signed Up For: Compromising Real-World LLM-Integrated Applications with Indirect Prompt Injection. arXiv, 2023. https://arxiv.org/abs/2302.12173

- [6] Cybersecurity Ventures. 2025 Cybercrime Report: Ransomware Damage Cost Projections. https://cybersecurityventures.com/cybercrime-damage-costs-10-trillion-by-2025/

- [7] Trend Micro. The AI-fication of Cyberthreats: Security Predictions for 2026. https://www.trendmicro.com/vinfo/us/security/research-and-analysis/predictions/the-ai-fication-of-cyberthreats-trend-micro-security-predictions-for-2026

- [8] Verizon. 2025 Data Breach Investigations Report (DBIR). https://www.verizon.com/business/resources/reports/dbir/

- [9] Microsoft. Microsoft Digital Defense Report 2025. https://cdn-dynmedia-1.microsoft.com/is/content/microsoftcorp/microsoft/msc/documents/presentations/CSR/Microsoft-Digital-Defense-Report-2025.pdf#page=1

- [10] FBI Internet Crime Complaint Center. 2025 Internet Crime Report (IC3). https://www.ic3.gov/AnnualReport/Reports/2025_IC3Report.pdf

- [11] Entrust. 2025 Identity Fraud Report: Deepfake Incidents. https://www.entrust.com/resources/report/2025-identity-fraud-report