AI Agent Workflows: How Autonomous Systems Execute Complex Tasks Without Apps (2026)

Every day, knowledge workers navigate an invisible tax on their productivity: opening one app to retrieve data, switching to another to schedule a meeting, copying outputs between platforms that were never designed to talk to each other. Understanding how AI agent workflows automate tasks across this fragmented landscape is one of the most practically valuable things a technologist or business leader can do in 2026. AI agent workflows do not merely speed up this process — they eliminate it entirely, replacing app-switching with a single autonomous pipeline that interprets, executes, and delivers.

⚠️ Tech Disclaimer: This guide explores 2026 AI trends for educational purposes. AI capabilities and software performance vary by platform; this is not professional, technical, or financial advice. Always verify with certified experts before relying on it for critical systems.

This article explains what AI agent workflows are, how autonomous AI workflows decompose and execute complex tasks, which tools power real deployments, and what the underlying architecture reveals about where this technology is heading through 2030. It is written for readers who want to understand agentic AI: not just its promise, but its mechanics. According to Stanford’s AI Index 2024, AI performance on planning and instruction-following benchmarks improved substantially between 2022 and 2024 [1], directly enabling the autonomous workflow execution this article examines.

For the macro-level context (how this shift is challenging the entire app ecosystem), explore our full pillar guide: AI Personal Agents Are Replacing Your Apps Faster Than You Think.

What Are AI Agent Workflows?

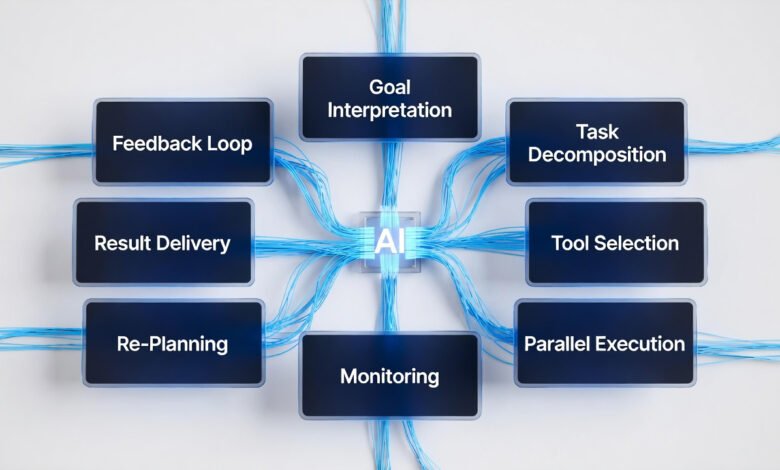

AI agent workflows are structured task pipelines executed by autonomous software agents that combine Large Language Models (LLMs), API integration layers, persistent memory systems, and planning algorithms into a single coordinated system. The defining characteristic is autonomy: the agent does not follow a pre-written script. It reasons through a problem, selects its own tools, monitors its own progress, and adapts when conditions change — all from a single natural-language instruction.

The concept of AI agents replacing app workflows rests on a structural shift in how software is used. Traditional applications execute one function, triggered manually, in isolation. AI agent workflows execute entire objectives — the user states the goal, the agent determines the method, and the pipeline handles every step in between. This is not incremental improvement; it is a different model of human-computer interaction entirely.

Five Core Characteristics of AI Agent Workflows

- Goal-based reasoning: The agent interprets intent — understanding the outcome the user needs, not just the action to trigger.

- Task decomposition: Complex objectives are broken into ordered, dependency-mapped subtasks, executed sequentially or in parallel.

- Dynamic tool selection: The agent selects the most appropriate API or service for each subtask — not locked into one application.

- Adaptive re-planning: When a step fails or returns unexpected results, the agent revises its approach rather than halting.

- Persistent memory: Context, preferences, and prior outcomes are retained across sessions, enabling progressive personalisation.

🧠 Knowledge Assessment: AI Agent Workflows

Q1: Which component of AI agent workflow architecture enables communication with external APIs, booking systems, and databases?

- A) GPU rendering engine
- B) API integration layer
- C) Static rule database
- D) Image processing pipeline

Q2: How do AI agent workflows automate tasks differently from traditional scripted automation?

- A) They require more human input per step
- B) They adapt dynamically using goal-based reasoning
- C) They are limited to single-app environments
- D) They rely exclusively on pre-coded instructions

Q3: What is the term for the process by which an AI agent breaks a complex goal into smaller, ordered, executable units?

- A) Memory consolidation
- B) API throttling
- C) Task decomposition
- D) Static workflow parsing

✅ Correct Answers:

- Q1 → B: API integration layer — enables the agent to interact with external services, booking platforms, and databases in real time.

- Q2 → B: Dynamic goal-based reasoning — unlike rigid scripts, AI agent workflows re-plan when conditions change or steps fail.

- Q3 → C: Task decomposition — the core planning process that converts a high-level goal into an ordered, executable subtask sequence.

How Autonomous Agents Break Tasks Into Subtasks

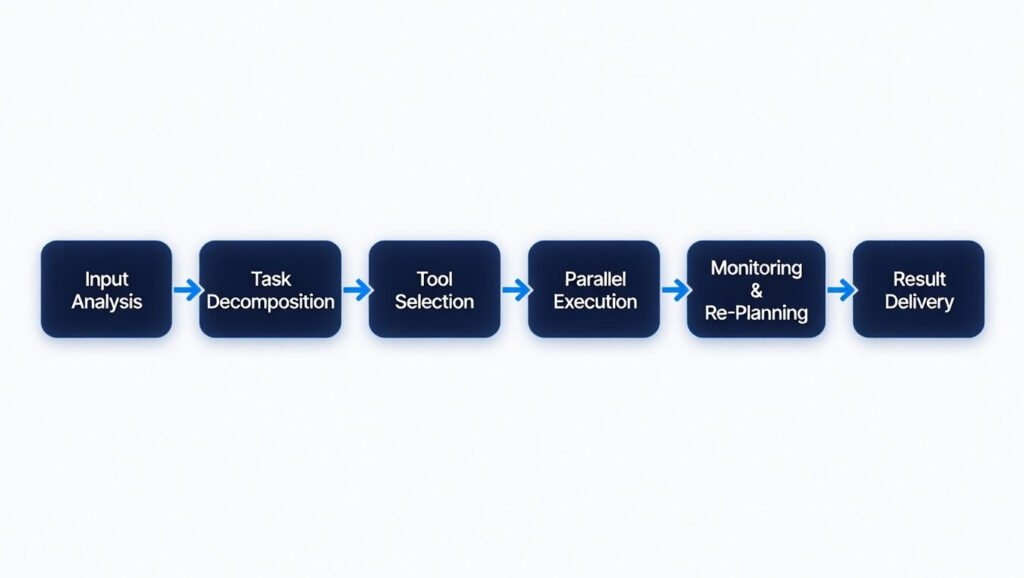

Task decomposition is the process at the core of every autonomous AI workflow. It is what transforms a capable language model into an agent that can actually complete work. When a user provides a complex goal — “Research the top five competitors in our market, summarise their pricing strategies, and schedule a team review meeting” — the agent must translate that into an ordered, executable sequence with clear subtasks, dependencies, and tool requirements.

The decomposition is driven by the agent’s LLM reasoning core, which evaluates the goal against its knowledge of available tools and typical task structures. The output is a task dependency graph: a map of what needs to happen, in what order, and which steps can run in parallel. Independent tasks execute simultaneously; those requiring prior outputs queue accordingly. This parallel capability is what makes AI agent workflows dramatically faster than sequential, manual alternatives.
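The dependency graph described above can be illustrated with a minimal sketch. It uses Python's standard `graphlib.TopologicalSorter`; the subtask names are hypothetical stand-ins for what an LLM planner might produce for the competitor-research goal, and a real agent would generate this mapping rather than hard-code it.

```python
from graphlib import TopologicalSorter

# Hypothetical subtasks: each key maps to the set of subtasks
# that must finish before it can start.
DEPENDENCIES = {
    "identify_competitors": set(),
    "check_team_calendars": set(),
    "fetch_pricing": {"identify_competitors"},
    "summarise_pricing": {"fetch_pricing"},
    "schedule_review": {"summarise_pricing", "check_team_calendars"},
}


def parallel_batches(dependencies):
    """Group subtasks into waves; every task in a wave is ready to run."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        batches.append(ready)
        sorter.done(*ready)  # unlock whatever depended on this wave
    return batches


BATCHES = parallel_batches(DEPENDENCIES)
for number, batch in enumerate(BATCHES, start=1):
    print(f"wave {number}: {', '.join(batch)}")
```

Tasks in the same wave have no unmet dependencies, so an executor could run them simultaneously; later waves wait for the outputs they need.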

The Workflow Execution Process

| Step | Stage | What Happens |
| --- | --- | --- |
| 1 | Input Analysis | The agent receives instructions and interprets the user’s intent via LLM reasoning. |
| 2 | Task Decomposition | The goal is split into ordered subtasks; dependencies are mapped for sequencing. |
| 3 | Tool Selection | For each subtask, the agent selects the most appropriate API or service. |
| 4 | Parallel Execution | Independent subtasks execute simultaneously; dependent ones queue accordingly. |
| 5 | Monitoring | Results are evaluated at each step; failures trigger re-planning automatically. |
| 6 | Result Delivery | Validated outputs are compiled and delivered; outcomes logged to persistent memory. |

McKinsey’s 2024 generative AI analysis identifies this adaptive, goal-driven approach as the highest-value application domain — precisely because it handles tasks too variable for traditional scripted automation [3]. The workflow step table above illustrates exactly how AI agent workflows automate tasks from the moment an instruction is received to the moment a validated result is delivered.
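As a rough illustration of stages 3 to 6, the sketch below runs subtasks one after another and re-plans by falling back to the next candidate tool when a call fails. Everything here is an assumption made for the example: the tool functions are stubs, the primary search is rigged to fail, and a real agent would ask its LLM core to choose a new approach rather than walk a fixed preference list. Parallel execution is left out for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class Subtask:
    name: str
    tools: list  # candidate tool names, in order of preference


@dataclass
class RunLog:
    results: dict = field(default_factory=dict)
    events: list = field(default_factory=list)


# Hypothetical tools: the primary search always fails to force a re-plan.
def primary_search(query):
    raise TimeoutError("primary search timed out")


def backup_search(query):
    return f"search results for {query}"


def calendar_booking(query):
    return f"booked: {query}"


TOOLS = {
    "primary_search": primary_search,
    "backup_search": backup_search,
    "calendar_booking": calendar_booking,
}


def run_workflow(subtasks):
    """Run subtasks in order, falling back to the next tool when one fails."""
    log = RunLog()
    for task in subtasks:
        for tool_name in task.tools:  # stage 3: tool selection
            try:
                output = TOOLS[tool_name](task.name)  # stage 4: execution
            except Exception as error:  # stage 5: monitoring
                log.events.append(f"{task.name}: {tool_name} failed ({error})")
                continue  # re-plan by trying the next candidate tool
            log.results[task.name] = output  # stage 6: record the result
            log.events.append(f"{task.name}: {tool_name} ok")
            break
        else:
            raise RuntimeError(f"no tool could complete {task.name!r}")
    return log


LOG = run_workflow([
    Subtask("competitor pricing", ["primary_search", "backup_search"]),
    Subtask("team review meeting", ["calendar_booking"]),
])
print(LOG.events)
```

The event list doubles as a simple trace: the failed primary call is recorded before the fallback succeeds, which is the kind of intermediate evidence the monitoring stage relies on.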

Tools Used in AI Agent Orchestration

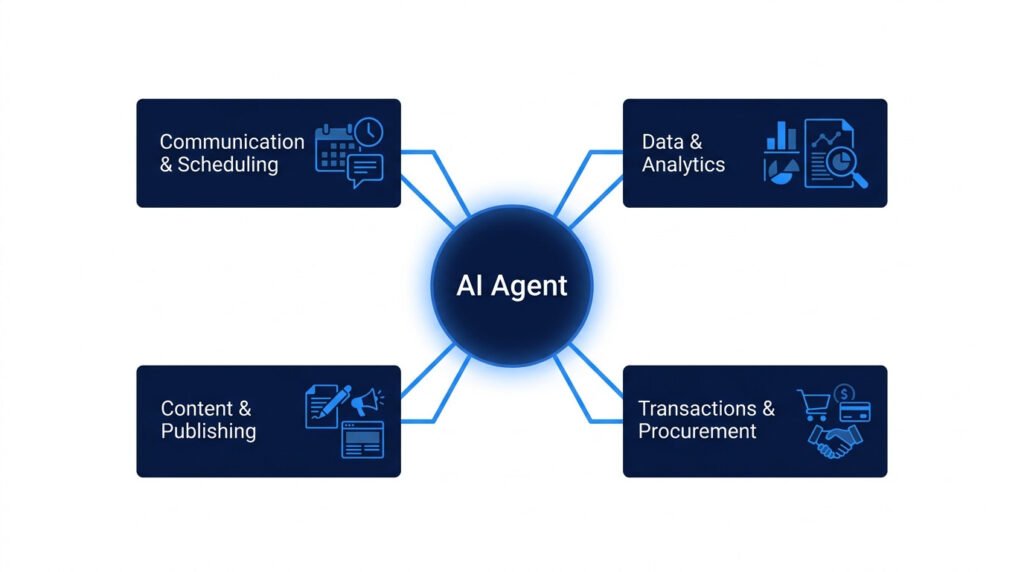

Understanding tools used in AI agent orchestration is essential for evaluating what a deployment can realistically achieve. The API integration layer is what transforms an LLM’s reasoning capabilities into real-world actions — and its breadth directly determines the scope of tasks an AI agent workflow can handle. In 2026, capable deployments will integrate across four primary tool categories.

Communication and Scheduling APIs

Agents connect to email platforms (Gmail, Outlook), calendar services (Google Calendar, Microsoft 365), and video conferencing APIs to manage communications autonomously — reading, drafting, sending, scheduling, and following up without requiring the user to open a single application. Microsoft’s Copilot enterprise deployments demonstrate measurable reductions in time spent on routine communication tasks [4].

Data, Analytics, and Knowledge Platforms

Through connections to database APIs, business intelligence tools, and document repositories, AI data orchestration agents query structured sources, identify patterns, generate visualisations, and produce written analytical summaries. Stanford’s 2024 AI Index documents significant LLM performance improvements on data comprehension benchmarks [1], making autonomous data workflows increasingly reliable for standard reporting tasks.

Content Creation and Publishing Services

Connected to CMS platforms, SEO tools, and social media APIs, AI content workflow agents handle the complete production cycle: interpreting a brief, generating drafts, optimizing for search, scheduling publication, distributing across channels, and monitoring performance — collapsing what once required a multi-person team into a single coordinated AI orchestration system.

Transaction, Procurement, and Enterprise Systems

For rule-governed procurement tasks, agents with access to vendor and e-commerce APIs can monitor pricing, execute approved purchases, manage subscriptions, and flag anomalies. As the EU AI Act’s governance framework matures [6], organisations are building the accountability structures required for autonomous procurement agents in production environments.
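What "rule-governed" could mean in practice is sketched below: a small policy check that runs before any purchase is executed. The vendor names, the spending limit, and the three outcome labels are all hypothetical; a production system would load its policy from governed configuration rather than module constants.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PurchaseRequest:
    vendor: str
    amount: float


# Hypothetical policy values, chosen only for illustration.
APPROVED_VENDORS = {"acme-cloud", "paperworks"}
AUTO_APPROVE_LIMIT = 500.00


def review_purchase(request):
    """Return 'execute', 'needs_human_approval', or 'reject'."""
    if request.vendor not in APPROVED_VENDORS:
        return "reject"  # unknown vendors are never purchased from
    if request.amount > AUTO_APPROVE_LIMIT:
        return "needs_human_approval"  # flag for a person to review
    return "execute"


DECISIONS = [
    review_purchase(PurchaseRequest("acme-cloud", 120.00)),
    review_purchase(PurchaseRequest("acme-cloud", 2400.00)),
    review_purchase(PurchaseRequest("unknown-shop", 15.00)),
]
print(DECISIONS)
```

Keeping the decision in plain code, outside the model, means the spending rules stay auditable even when the agent's reasoning is not.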

The Architecture of Agent-Based Automation

Once the architecture is laid out, the distinction between a capable agentic system and a simple chatbot becomes clear. Production-grade agent-based automation does not rely on a single AI model doing everything. It operates across four coordinated architectural layers, each serving a distinct function in the pipeline.

Layer 1 — LLM Reasoning Core

The top layer handles goal interpretation, multi-step planning, natural language generation, and adaptive re-planning. Models such as GPT-4o, Gemini 2.0, and Claude Sonnet 4 power this layer, providing the reasoning capability that makes autonomous AI workflows fundamentally different from scripted automation.

Layer 2 — Planning and Orchestration

This layer manages task decomposition, dependency mapping, and execution sequencing. It determines which subtasks run in parallel, which must queue, and how failures should be handled — transforming the LLM’s reasoning output into an executable AI agent workflow plan.
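One way such an orchestration layer might schedule work is sketched below with `asyncio`: every subtask becomes a task that first awaits its prerequisites, so independent subtasks overlap while dependent ones queue. The graph and the `fake_action` coroutine are hypothetical, and the sketch assumes the graph is acyclic and every dependency is listed as a key (a cycle would deadlock).

```python
import asyncio


async def run_graph(dependencies, action):
    """Run each subtask as soon as all of its prerequisites have finished."""
    tasks = {}

    async def run(name):
        # Independent subtasks pass straight through this await.
        await asyncio.gather(*(tasks[dep] for dep in dependencies[name]))
        return await action(name)

    # All tasks are created before any of them starts running.
    for name in dependencies:
        tasks[name] = asyncio.create_task(run(name))
    results = await asyncio.gather(*tasks.values())
    return dict(zip(tasks, results))


ORDER = []  # records completion order for inspection


async def fake_action(name):
    await asyncio.sleep(0.01)  # stand-in for a real API call
    ORDER.append(name)
    return name.upper()


GRAPH = {
    "fetch_news": [],
    "fetch_products": [],
    "merge_findings": ["fetch_news", "fetch_products"],
    "write_brief": ["merge_findings"],
}

RESULTS = asyncio.run(run_graph(GRAPH, fake_action))
print(ORDER)
```

The two fetch steps run concurrently, while the merge and the brief each wait for their inputs, mirroring the parallel-versus-queued split described above.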

Layer 3 — API Integration

The connective tissue of any AI orchestration system — enabling real-world actions across external services. The breadth of API connections defines the practical scope of what the agent can do. Gartner projects that by 2028, 33% of enterprise software will include agentic AI functionality [2], with API integration serving as the primary enabler.
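A minimal sketch of how an integration layer might expose tools to the planner: each tool declares the capabilities it provides, and the agent looks one up per subtask. The tool names and capability labels are invented for the example, and the lambdas stand in for real API clients.

```python
from typing import Callable, NamedTuple


class Tool(NamedTuple):
    name: str
    capabilities: frozenset
    call: Callable[[str], str]


# Hypothetical registry; real entries would wrap authenticated API clients.
REGISTRY = [
    Tool("calendar_api", frozenset({"schedule", "availability"}),
         lambda arg: f"calendar handled: {arg}"),
    Tool("email_api", frozenset({"send", "draft"}),
         lambda arg: f"email handled: {arg}"),
    Tool("crm_api", frozenset({"lookup"}),
         lambda arg: f"crm handled: {arg}"),
]


def select_tool(capability, registry=REGISTRY):
    """Return the first registered tool that provides the capability."""
    for tool in registry:
        if capability in tool.capabilities:
            return tool
    raise LookupError(f"no registered tool provides {capability!r}")


CHOSEN = select_tool("draft")
print(CHOSEN.name, "->", CHOSEN.call("follow-up to supplier"))
```

Under this design the agent's practical scope is exactly the union of registered capabilities, which is why the breadth of API connections matters so much.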

Layer 4 — Persistent Memory

Stores user context, prior decisions, preferences, and workflow outcomes across sessions. Without this layer, agents are stateless — re-learning context on every interaction. With it, they build progressively accurate user models that enable the deep personalisation separating a genuinely useful AI agent from a generic automation script. DeepLearning.AI research identifies persistent memory as a key differentiator in agent performance [5].
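The difference between a stateless and a stateful agent can be shown with a small sketch of a memory store backed by Python's built-in `sqlite3`. The class name, table layout, and the stored preference are hypothetical; production systems often add vector search or summarisation on top, which this example does not attempt.

```python
import json
import os
import sqlite3
import tempfile


class AgentMemory:
    """Key-value memory per user, persisted to a SQLite file."""

    def __init__(self, path):
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS memory ("
            "user_id TEXT, key TEXT, value TEXT, "
            "PRIMARY KEY (user_id, key))"
        )

    def remember(self, user_id, key, value):
        with self.db:  # commits on success, rolls back on error
            self.db.execute(
                "INSERT OR REPLACE INTO memory VALUES (?, ?, ?)",
                (user_id, key, json.dumps(value)),
            )

    def recall(self, user_id, key, default=None):
        row = self.db.execute(
            "SELECT value FROM memory WHERE user_id = ? AND key = ?",
            (user_id, key),
        ).fetchone()
        return json.loads(row[0]) if row else default

    def close(self):
        self.db.close()


# Two separate "sessions" sharing one database file.
PATH = os.path.join(tempfile.mkdtemp(), "agent_memory.db")
first_session = AgentMemory(PATH)
first_session.remember("user-1", "preferred_meeting_time", "10:00")
first_session.close()

second_session = AgentMemory(PATH)
RECALLED = second_session.recall("user-1", "preferred_meeting_time")
MISSING = second_session.recall("user-1", "favourite_vendor", default="unknown")
second_session.close()
print(RECALLED, MISSING)
```

The second session never saw the original instruction, yet it recovers the preference from disk, which is the property that lets an agent avoid re-learning context on every interaction.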

Multi-Agent Collaborative Architecture

The most advanced configuration extends this four-layer model into a multi-agent collaborative automation architecture for enterprise environments — where specialised sub-agents handle discrete workflow components in parallel, coordinated by an orchestrating agent. This approach parallelises complex, multi-workstream projects at a speed and scale no single-agent system can match, and represents the current research frontier of agent-based automation design [5].
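The orchestrator pattern can be sketched in a few lines. Here the sub-agents are plain functions with hypothetical names, run in parallel with the standard library's `ThreadPoolExecutor`, and a final agent merges their output; real sub-agents would each wrap their own model calls and tools.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical specialised sub-agents, reduced to simple functions.
def research_agent(brief):
    return f"research notes on {brief}"


def pricing_agent(brief):
    return f"pricing table for {brief}"


def writing_agent(sections):
    return " | ".join(sections)


def orchestrate(brief):
    """Fan the brief out to specialists in parallel, then merge the results."""
    specialists = {"research": research_agent, "pricing": pricing_agent}
    with ThreadPoolExecutor(max_workers=len(specialists)) as pool:
        futures = {
            role: pool.submit(agent, brief)
            for role, agent in specialists.items()
        }
        # Collect in a fixed role order so the merged output is stable.
        sections = [futures[role].result() for role in specialists]
    return writing_agent(sections)


REPORT = orchestrate("widgets")
print(REPORT)
```

Threads suit this sketch because sub-agent work is typically dominated by waiting on network calls; the coordinating logic stays the same if the specialists become separate services.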

💡 For more information, explore the complete segments of our AI & Personal Technology Series

Examples of Autonomous AI Task Execution in 2026

The following examples of autonomous AI task execution are drawn from current commercial deployments and active development contexts — grounding the architectural concepts above in concrete, observable outcomes.

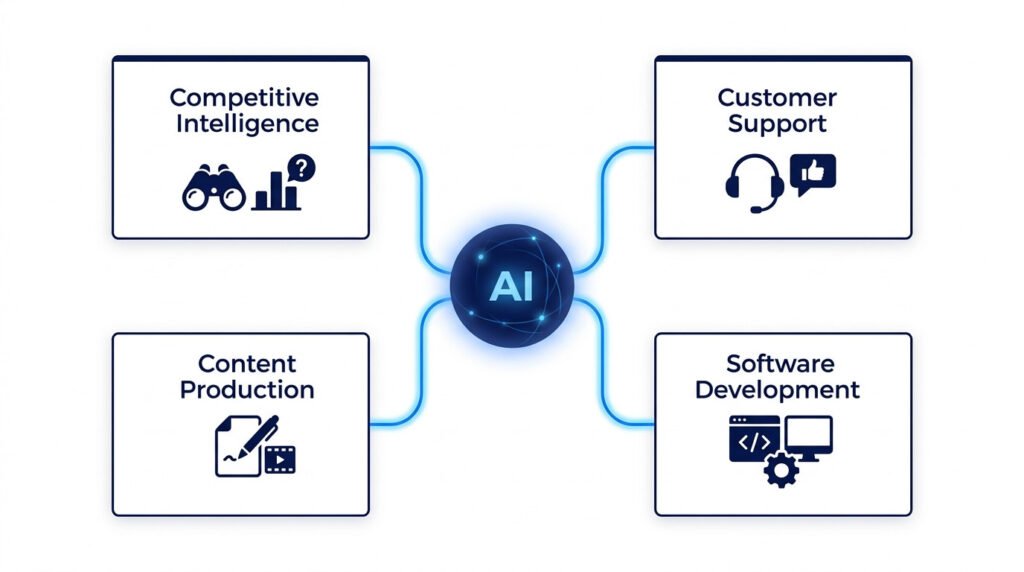

Enterprise Competitive Intelligence

A strategy team instructs an AI research workflow agent: “Summarise all competitor product announcements in our market segment over the last 60 days.” The agent queries news APIs, product databases, and industry publications simultaneously; identifies the most significant developments; cross-references them against the company’s own product roadmap; and delivers a structured brief with source citations. The manual equivalent requires a minimum of two to three analyst-hours — a figure consistent with McKinsey’s analysis of knowledge worker research task duration [3]. The autonomous AI task execution completes it in under five minutes.

Customer Support Triage and Resolution

An AI customer support workflow receives an incoming ticket, queries the CRM for account history, identifies the issue category, retrieves the relevant resolution protocol, drafts a personalised response, schedules a follow-up, and logs the outcome — autonomously for standard cases. Complex cases are escalated with a full context summary pre-prepared. This is the deployment model in Microsoft’s Copilot enterprise integrations [4], delivering measurable reductions in average handling time.
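The triage flow above might look roughly like the following sketch. The CRM record, resolution protocols, and keyword table are invented placeholders, and keyword matching stands in for the LLM classification a real deployment would use; the point is the split between automatic handling and escalation with context attached.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    customer_id: str
    text: str


# Hypothetical stand-ins for a CRM lookup and a resolution knowledge base.
CRM = {"c-42": {"plan": "pro", "open_tickets": 1}}
PROTOCOLS = {
    "password_reset": "Use the self-service reset link on the sign-in page.",
    "billing": "Your latest invoice is available under Account > Billing.",
}
KEYWORDS = {"password": "password_reset", "invoice": "billing"}


def triage(ticket):
    """Auto-reply to recognised issues; escalate everything else."""
    account = CRM.get(ticket.customer_id, {"plan": "unknown"})
    text = ticket.text.lower()
    category = next(
        (cat for word, cat in KEYWORDS.items() if word in text), None
    )
    if category is None:
        # Complex case: hand over with the context already gathered.
        return {
            "action": "escalate",
            "context": {"account": account, "ticket": ticket.text},
        }
    return {
        "action": "auto_reply",
        "category": category,
        "draft": f"Thanks for getting in touch. {PROTOCOLS[category]}",
    }


STANDARD = triage(Ticket("c-42", "I forgot my password again"))
COMPLEX = triage(Ticket("c-42", "The export job corrupts our data"))
print(STANDARD["action"], COMPLEX["action"])
```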

Full-Cycle Content Production

A weekly content brief triggers an AI content orchestration workflow that generates SEO-optimised drafts, formats them for the CMS, schedules publication at analytically optimal times, distributes across social platforms, and delivers a 48-hour performance summary — compressing a previously multi-person, multi-day process into a single autonomous AI workflow cycle.

Software Development Pipelines

AI development workflow agents — commercially represented by GitHub Copilot, Cursor, and Devin — interpret a feature specification, generate code, run test suites, identify failures, revise implementations, and prepare pull requests as part of an integrated AI automation pipeline. This represents the highest-velocity application area for AI agent workflows in 2026, with tooling maturing faster than any other domain.

Why AI Agent Workflows Could Replace Traditional Apps

The case for AI agents replacing app workflows is structural, not merely a matter of convenience. Applications are purpose-built for single functions. AI agent workflows are purpose-built for outcomes. As users increasingly think in terms of goals — not tools — the app-switching model introduces friction that autonomous AI orchestration eliminates.

In practical terms, AI agent workflows automate tasks across apps by acting as an intelligent coordination layer above the application stack. In the near term, agents make apps invisible: the user interacts with the agent, not the application. In the medium term, for high-frequency everyday tasks, the apps themselves may become redundant as agents deliver direct results conversationally. Gartner projects that 33% of enterprise software will include agentic AI functionality by 2028 [2], a trajectory that signals structural change, not incremental adoption.

Counter-Arguments: What Could Limit AI Agent Workflow Adoption?

A credible assessment of AI agent workflows requires direct engagement with their documented limitations.

- Security exposure from broad permissions: Agents with access to email, financial APIs, and databases create significant attack surfaces. A compromised or misaligned autonomous AI workflow could execute harmful actions at scale before human review occurs. Under the EU AI Act’s risk-based framework [6], some such deployments may be classed as high-risk, requiring governance frameworks that remain immature in most organisations.

- Error propagation in multi-step pipelines: A single misinterpretation early in an AI agent workflow can cascade through subsequent steps — completing an entire pipeline incorrectly before any human checkpoint. Robust intermediate monitoring and rollback capabilities are engineering requirements, not optional features.

- Accountability gaps in automated decisions: When an autonomous AI task execution produces a consequential error — an incorrect communication, a wrong transaction — attribution is legally and operationally complex. Full audit trails are mandatory for regulated-industry deployments.

- Overstated near-term reliability: Current AI orchestration systems perform well in structured, information-rich contexts. They remain less dependable in genuinely ambiguous or politically sensitive situations. Harvard Business Review positions the most effective human-AI arrangements as augmentative, not substitutive [7].

- Integration maintenance overhead: Building reliable multi-platform AI agent workflows across dozens of enterprise systems — each with distinct authentication, rate limits, and data formats — introduces engineering complexity that smaller organisations may find difficult to sustain.
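Several of the safeguards named in this list (least-privilege permissions, audit trails, rollback) can be combined in one small wrapper, sketched below. The class, scope strings, and the in-memory calendar are hypothetical; the sketch also assumes every action can supply its own undo step, which is not true of all real-world side effects such as a sent email.

```python
class PermissionDenied(Exception):
    pass


class GuardedAgent:
    """Wraps actions with a scope check, an audit log, and an undo stack."""

    def __init__(self, allowed_scopes):
        self.allowed_scopes = frozenset(allowed_scopes)
        self.audit_log = []
        self._undo_stack = []

    def act(self, scope, description, do, undo):
        if scope not in self.allowed_scopes:
            self.audit_log.append(("denied", scope, description))
            raise PermissionDenied(scope)
        do()
        self._undo_stack.append(undo)
        self.audit_log.append(("done", scope, description))

    def rollback(self):
        # Undo completed steps in reverse order.
        while self._undo_stack:
            self._undo_stack.pop()()
        self.audit_log.append(("rolled_back", "*", "all completed steps reverted"))


calendar = []  # stand-in for an external system
agent = GuardedAgent(allowed_scopes={"calendar:write"})
agent.act(
    "calendar:write",
    "add review meeting",
    do=lambda: calendar.append("review meeting"),
    undo=lambda: calendar.remove("review meeting"),
)
try:
    agent.act("payments:write", "pay invoice", do=lambda: None, undo=lambda: None)
except PermissionDenied:
    pass  # the attempt is still recorded in the audit log
agent.rollback()
print(calendar, agent.audit_log)
```

Denied attempts are logged as well as completed ones, so a reviewer can see what the agent tried to do and not only what it was allowed to do.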

The Future of Autonomous Task Execution: 2026–2030

Three capability frontiers will define AI agent workflow development through the end of this decade — each representing a meaningful extension of what autonomous systems can reliably achieve.

Multi-agent collaborative execution will move from research prototypes to commercial products between 2027 and 2028. Systems in which specialised sub-agents operate in parallel under an orchestrating coordinator will execute complex, multi-workstream projects at speeds no single-agent system can match. The practical outcome: tasks currently measured in days may be completed in hours.

Long-horizon planning — sustaining coherent, goal-directed behaviour across days or weeks rather than minutes — will unlock autonomous AI task execution for genuinely complex extended projects. This is where AI agent workflows begin to approach the capability level of a skilled human project manager, rather than an advanced automation script. DeepLearning.AI research identifies this as the critical next threshold for agentic systems [5].

OS-level and device integration will extend agent reach beyond web APIs to local file systems, desktop applications, and device-level functions — covering the full scope of knowledge worker activity. Combined with maturing EU AI Act governance frameworks [6], this will allow organisations to deploy agent-based automation across substantially broader domains with appropriate accountability structures in place.

For a comprehensive view of how this trajectory is reshaping the consumer and enterprise app landscape, learn more in our detailed pillar guide: AI Personal Agents Are Replacing Your Apps Faster Than You Think.

Key Takeaways

- AI agent workflows replace manual, app-by-app task management with autonomous goal-to-outcome pipelines combining LLM reasoning, task decomposition, API integration, and persistent memory.

- Understanding how AI agent workflows automate tasks requires grasping the decomposition process — where complex goals become ordered, parallel-executable subtask sequences.

- The four tool categories used in AI agent orchestration (communication, data, content, and transactions) define the practical scope of what deployments can achieve.

- The four-layer AI agent workflow architecture (LLM → Planning → API → Memory) is the structural foundation all capable systems share.

- Real examples of autonomous AI task execution — from competitive intelligence to software development — demonstrate measurable efficiency gains over manual alternatives.

- AI agents replacing app workflows is a structural trend, not a marginal upgrade — projected by Gartner to affect 33% of enterprise software by 2028 [2].

- Security governance, error monitoring, audit trails, and human review checkpoints are non-negotiable requirements for production deployments.

FAQ

Q1: What are AI agent workflows?

A: AI agent workflows are autonomous task pipelines in which AI systems interpret a goal, decompose it into subtasks, select appropriate tools, execute across multiple platforms, and deliver validated results, without manual human coordination at each step.

Q2: How do AI agent workflows automate tasks differently from traditional tools?

A: Traditional automation executes rigid, pre-coded scripts that halt on unexpected inputs. AI agent workflows use LLM-driven goal reasoning to adapt dynamically — re-planning when steps fail and selecting tools in real time across platforms that traditional scripts cannot bridge.

Q3: What tools are used in AI agent orchestration?

A: The primary tool categories are: communication and scheduling APIs, data and analytics platforms, content creation and CMS services, and transaction and procurement systems. The agent selects dynamically from these based on the requirements of each individual subtask.

Q4: Which industries are leading AI agent workflow adoption in 2026?

A: Enterprise software, financial services, marketing technology, and software development are the highest-maturity domains. Customer support automation is also advancing rapidly, with deployments at measurable scale on platforms such as Microsoft Copilot [4].

Q5: Are AI agent workflows safe for enterprise deployment?

A: Safety depends on access scope, audit logging, and governance frameworks. The EU AI Act [6] provides a risk classification reference. Organisations should implement least-privilege permissions, full decision audit trails, intermediate human review checkpoints, and rollback mechanisms before deploying agents in consequential workflows.

AI & Personal Technology Series

This article is part of the AI & Personal Technology Series — a practical collection of guides exploring how autonomous AI systems are reshaping productivity, privacy, and the future of human-technology interaction.

References

- [1] Stanford HAI. Artificial Intelligence Index Report 2024. https://aiindex.stanford.edu/report/

- [2] Gartner. What Is Agentic AI? (Public Summary). https://www.gartner.com/en/information-technology/topics/agentic-ai

- [3] McKinsey Global Institute. The Economic Potential of Generative AI (2024). https://www.mckinsey.com/capabilities/tech-and-ai/our-insights/the-economic-potential-of-generative-ai-the-next-productivity-frontier

- [4] Microsoft. Copilot AI Agents: How They Work in Microsoft 365. https://www.microsoft.com/en-us/microsoft-copilot/copilot-101/copilot-ai-agents

- [5] DeepLearning.AI. How Agents Can Improve LLM Performance. https://www.deeplearning.ai/the-batch/how-agents-can-improve-llm-performance/

- [6] European Commission. EU AI Act: Regulatory Framework for Artificial Intelligence. https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

- [7] Harvard Business Review. Collaborative Intelligence: Humans and AI Are Joining Forces (2018). https://hbr.org/2018/07/collaborative-intelligence-humans-and-ai-are-joining-forces